Guide to Building Intelligent Systems

TL;DR

- Move from deterministic legacy microservices to non-deterministic, agentic architectures.

- Treat AI as the primary infrastructure constraint, not an external service.

- Integrate data as a living participant in the inference process.

- Optimize costs and accuracy by utilizing Domain-Specific Language Models (DSLMs).

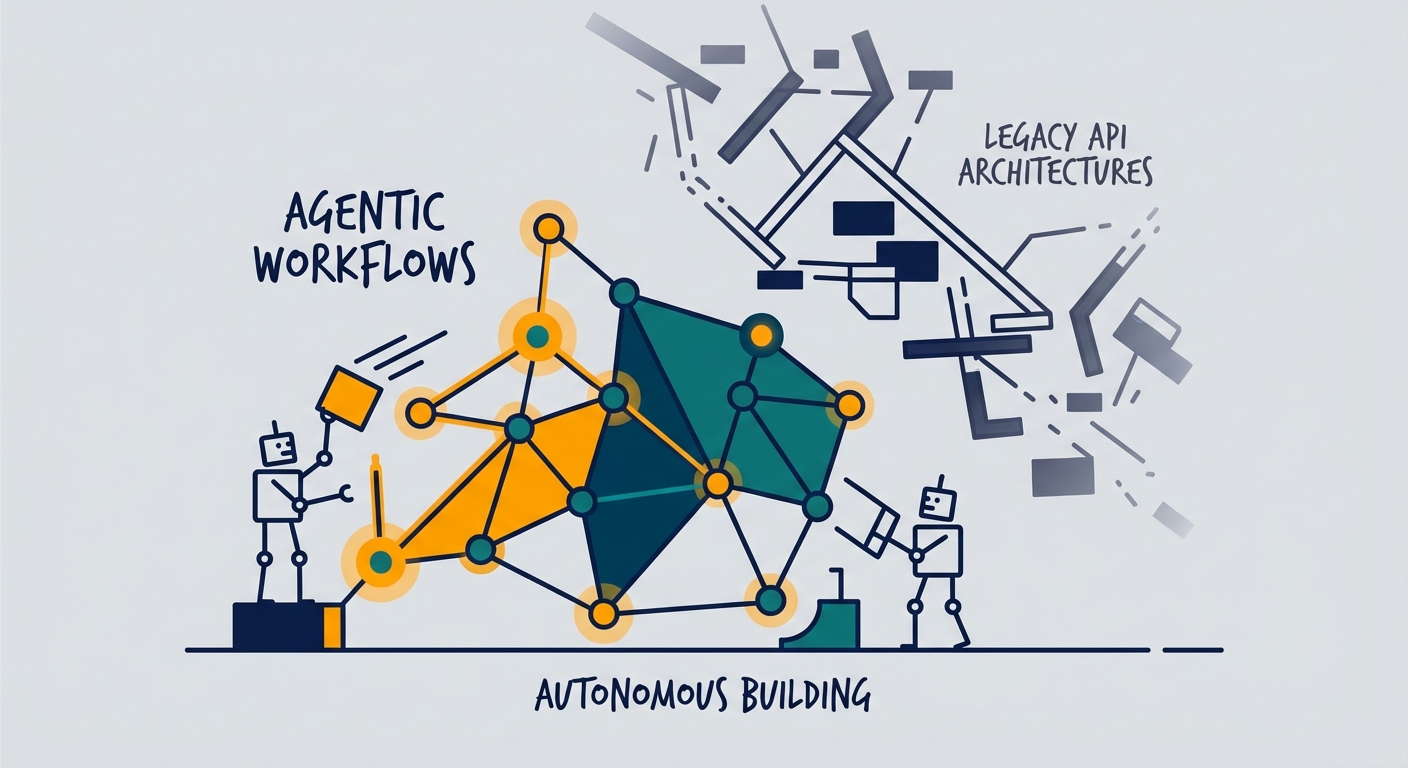

The era of slapping a chatbot onto a legacy backend is over. In 2026, the industry hit a wall: standard API-heavy architectures buckle under the weight of non-deterministic, agentic workflows.

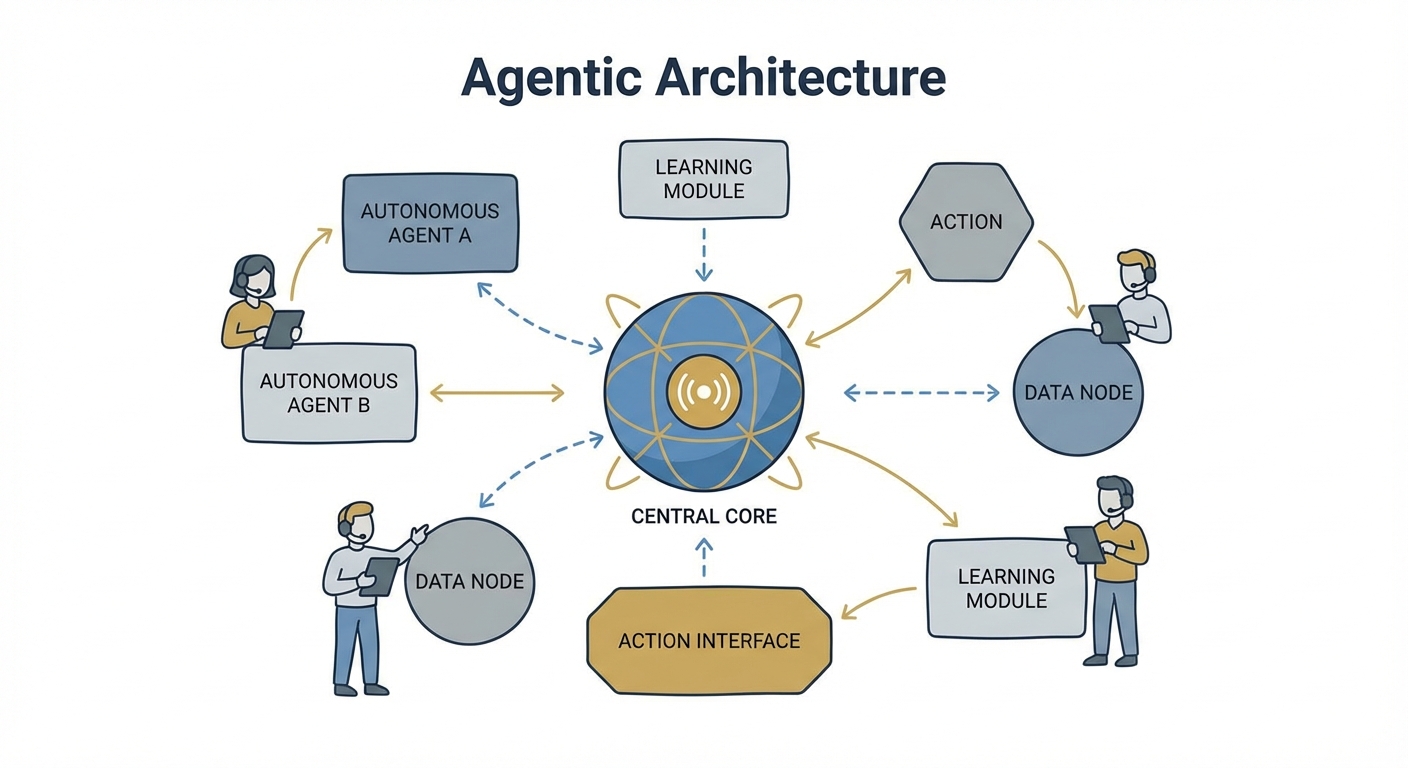

If you’re still treating AI like an external service you just "query," you’re already behind. To build systems that actually work in this new paradigm, you need to stop thinking of AI as a feature and start treating it as the primary constraint of your entire infrastructure. As highlighted in the State of AI Report 2026, the shift toward "AI-Native" design means your compute, data storage, and orchestration layers must be built to support autonomous agents—not just passive, predictable request-response cycles.

Why Traditional System Design Fails the Agentic Era

Legacy microservices were built on one holy rule: code is deterministic. You send a request, the service runs a set of logic, and you get a predictable response. It’s neat. It’s tidy. It’s also completely useless for modern AI.

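Intelligent systems are fundamentally non-deterministic. When you introduce an agent (an autonomous entity that plans, uses tools, and iterates), a single user request can fan out into an unpredictable number of model and tool calls. The traditional "API Gateway," designed around one request and one response, becomes a massive bottleneck.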

Think about how we used to treat databases. They were static repositories, sitting there waiting to be queried. In an AI-native world, that’s a liability. Data must be a living participant in the inference process. When you force your LLM to bridge the gap to your business logic via rigid API integrations, you’re asking for latency spikes and context-window degradation. You have to move away from rigid, procedural flows and toward an event-driven orchestration layer.
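To make "event-driven orchestration" concrete, here is a minimal in-process sketch. The `EventBus` class, the `order.updated` topic, and the `context_cache` dictionary are all hypothetical names invented for illustration; a production system would more likely sit on a message broker. The point is only that data changes push themselves toward the inference layer instead of waiting to be queried.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], None]


class EventBus:
    """Tiny publish/subscribe bus (hypothetical, for illustration only)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._handlers[topic].append(handler)

    def publish(self, topic: str, payload: dict) -> int:
        """Deliver the payload to every subscriber; return how many handlers ran."""
        handlers = list(self._handlers.get(topic, []))
        for handler in handlers:
            handler(payload)
        return len(handlers)


bus = EventBus()
context_cache: Dict[str, dict] = {}  # stands in for the agent's working context


def refresh_context(event: dict) -> None:
    # Keep the agent's view of an order current as soon as it changes.
    context_cache[event["order_id"]] = event


bus.subscribe("order.updated", refresh_context)
delivered = bus.publish("order.updated", {"order_id": "A-17", "status": "shipped"})
```

Here the business system never gets "called" by the model. It emits an event, and whatever needs fresh context subscribes to it.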

The Four Pillars of AI-Native Architecture

Resilient intelligent systems aren’t just code; they’re evolving ecosystems. You aren't building a product; you’re building an organism. Here is how to keep it alive.

1. Stop Overpaying for Intelligence: The Case for DSLMs

The obsession with using the largest, most expensive foundation models for every single task is a fast track to technical bankruptcy. We are seeing a massive pivot toward Domain-Specific Language Models (DSLMs).

These smaller, precision-tuned models operate on specialized vocabularies and proprietary datasets. They slash inference costs and, more importantly, they actually know what they’re talking about. If you are struggling to maintain accuracy in your specific industry vertical, exploring Custom AI Solution Development is often the missing piece to achieving the precision your users demand. Don't use a sledgehammer to crack a nut.

2. Data Mesh: The AI Backbone

Centralized data lakes are where AI performance goes to die. When data is trapped in a monolithic silo, your feedback loops stagnate. By adopting the Data Mesh Principles introduced by Zhamak Dehghani and published on Martin Fowler’s site, you start treating data as a product.

Each domain team owns the quality of its own data and publishes clean, contextualized streams ready for RAG (Retrieval-Augmented Generation). This decentralization is what lets an intelligent system scale without collapsing under the weight of messy, inconsistent data.
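One way to picture "data as a product" is a domain team enforcing a small contract before anything reaches the retrieval index. The sketch below is an assumption-heavy illustration: the `Chunk` fields, the `publish_chunks` function, and the rule "every record needs non-empty text and a source" are invented here, not taken from any data mesh specification.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Chunk:
    """A retrieval-ready record published by a domain team (hypothetical schema)."""
    text: str
    source: str
    owner_domain: str


def publish_chunks(records: Iterable[dict], owner_domain: str) -> List[Chunk]:
    """Keep only records that satisfy the contract: non-empty text and a source."""
    chunks = []
    for record in records:
        text = (record.get("text") or "").strip()
        source = record.get("source")
        if not text or not source:
            continue  # reject incomplete rows at the domain boundary
        chunks.append(Chunk(text=text, source=source, owner_domain=owner_domain))
    return chunks


raw = [
    {"text": "Refunds are processed within 5 days.", "source": "policy.md"},
    {"text": "   ", "source": "draft.md"},  # blank text: rejected
    {"text": "Orphan note"},                # no source: rejected
]
billing_chunks = publish_chunks(raw, owner_domain="billing")
```

The filtering happens where the data is owned, so the RAG pipeline downstream never has to guess whether a record is trustworthy.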

3. Edge Computing: Speed Over Everything

The cloud isn't always the answer. According to IDC: Edge Computing Trends 2026, the proximity of computation to data generation is now the biggest driver of system efficiency.

If your system requires immediate, context-aware actions, stop sending every single packet to a central server. By pushing inference to the network periphery—using CDNs or localized edge nodes—you kill the round-trip latency that destroys the user experience.
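A rough sketch of that decision: give each request a latency budget and pick the first inference target that fits it. The `Target` class, the target names, and every millisecond figure below are made-up illustrative values, not measurements.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Target:
    """An inference location with estimated latencies (illustrative numbers)."""
    name: str
    network_ms: float
    inference_ms: float

    @property
    def total_ms(self) -> float:
        return self.network_ms + self.inference_ms


def pick_target(preferred_order: Sequence[Target], budget_ms: float) -> Target:
    """Return the first preferred target within budget, else the fastest one."""
    for target in preferred_order:
        if target.total_ms <= budget_ms:
            return target
    return min(preferred_order, key=lambda t: t.total_ms)


# Most capable first; the edge node is the low-latency fallback.
targets = [
    Target("cloud-large", network_ms=120, inference_ms=300),
    Target("edge-small", network_ms=8, inference_ms=40),
]
relaxed = pick_target(targets, budget_ms=500)
strict = pick_target(targets, budget_ms=100)
```

With a relaxed budget the request still goes to the larger cloud model. Tighten the budget and the edge node wins, because the network round trip alone would blow it.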

4. Serverless FinOps for AI

High-compute AI tasks can bankrupt a project if left unchecked. You need to integrate serverless patterns that enforce strict FinOps. Use pay-per-use triggers for agentic task execution so you aren't paying for idle GPU time. Modern systems use "lazy evaluation" for their AI components, spinning up compute only when a high-intent task actually demands an agentic hand-off.
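The "lazy evaluation" idea can be sketched in a few lines: the expensive agent is not constructed until a request actually crosses an intent threshold. Everything named here (`LazyAgent`, `handle`, the `intent_score` field, the 0.8 threshold) is a hypothetical stand-in; in a real deployment the factory would be whatever provisions your serverless or GPU-backed worker.

```python
from typing import Callable, Optional

Agent = Callable[[str], str]


class LazyAgent:
    """Defers building the expensive agent until it is first needed."""

    def __init__(self, factory: Callable[[], Agent]) -> None:
        self._factory = factory
        self._agent: Optional[Agent] = None
        self.cold_starts = 0  # how many times compute was actually provisioned

    def run(self, task: str) -> str:
        if self._agent is None:
            self._agent = self._factory()
            self.cold_starts += 1
        return self._agent(task)


def handle(request: dict, agent: LazyAgent, intent_threshold: float = 0.8) -> str:
    """Send only high-intent requests to the agent; answer the rest cheaply."""
    if request["intent_score"] < intent_threshold:
        return "cheap path: " + request["text"]
    return agent.run(request["text"])


def expensive_factory() -> Agent:
    # Placeholder for provisioning real compute.
    return lambda task: "agent handled: " + task


agent = LazyAgent(expensive_factory)
low = handle({"text": "hi", "intent_score": 0.2}, agent)
high = handle({"text": "rebook my flight", "intent_score": 0.95}, agent)
```

The low-intent request never touches the factory, so nothing is provisioned until the second call.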

The Self-Improving Loop: Building Persistent Feedback

An intelligent system should become smarter the longer it runs. If your architecture doesn't have a built-in mechanism to capture failure cases and feed them back into the training pipeline, you aren't building an "intelligent system." You’re just building a fancy, static product.

The most robust architectures implement a closed feedback loop. Operational data (user corrections, tool-use failures, and confidence scores) should be automatically tagged and routed back into the fine-tuning process. This creates a "flywheel" effect where the model improves incrementally against your specific business requirements.
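As a minimal sketch of the tagging step, assume each interaction is logged with a confidence score plus optional correction and tool-error fields. The `Interaction` schema, the tag names, and the 0.6 confidence cutoff are assumptions made for this example, not a standard.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Interaction:
    """One logged exchange (hypothetical schema)."""
    prompt: str
    response: str
    confidence: float
    user_correction: Optional[str] = None
    tool_error: Optional[str] = None


def tag(interaction: Interaction, min_confidence: float = 0.6) -> List[str]:
    """Label the failure signals present in one interaction."""
    tags = []
    if interaction.user_correction is not None:
        tags.append("user_correction")
    if interaction.tool_error is not None:
        tags.append("tool_failure")
    if interaction.confidence < min_confidence:
        tags.append("low_confidence")
    return tags


def build_review_queue(interactions: List[Interaction]) -> List[dict]:
    """Collect tagged interactions as candidates for the fine-tuning pipeline."""
    queue = []
    for item in interactions:
        tags = tag(item)
        if tags:
            queue.append({
                "prompt": item.prompt,
                "target": item.user_correction,  # None means it still needs a label
                "tags": tags,
            })
    return queue


log = [
    Interaction("Total due?", "$40", confidence=0.95),
    Interaction("Total due?", "$40", confidence=0.90, user_correction="$45"),
    Interaction("Ship date?", "Unknown", confidence=0.30, tool_error="timeout"),
]
queue = build_review_queue(log)
```

Clean interactions are left out of the queue. Only the cases that carry a failure signal come back around for training.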

Architectural Deep-Dive: The Agentic Request Path

In a mature agentic system, the request path is no longer a straight line. It’s a mess of recursive loops. When a user sends a query, your orchestration layer has to decide: does this need a "fast-path" (cached vector search) or a "deep-reasoning path" (multi-step agent execution)?
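Here is one hedged way to sketch that routing decision: compare the query embedding against cached entries and only take the fast path when the best match is close enough. The two-dimensional vectors are hand-written stand-ins for real embeddings, and the 0.9 threshold is an arbitrary assumption you would tune.

```python
import math
from typing import List, Optional, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def choose_path(
    query_vec: Sequence[float],
    cache: List[Tuple[Sequence[float], str]],
    threshold: float = 0.9,
) -> Tuple[str, Optional[str]]:
    """Return ("fast", cached_answer) on a close match, else ("deep", None)."""
    best_answer, best_score = None, 0.0
    for cached_vec, answer in cache:
        score = cosine(query_vec, cached_vec)
        if score > best_score:
            best_answer, best_score = answer, score
    if best_score >= threshold:
        return "fast", best_answer
    return "deep", None  # hand off to multi-step agent execution


cache = [
    ([1.0, 0.0], "Reset it from the login page."),
    ([0.0, 1.0], "Invoices are under Billing."),
]
near_hit = choose_path([0.99, 0.05], cache)
miss = choose_path([0.7, 0.7], cache)
```

The second query sits halfway between both cached entries, so neither is trusted and the request escalates to the deep-reasoning path.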

RAG sits at the intersection of these paths, and it’s where most people mess up security. You must ensure that the context injected into the prompt is sanitized and governed by the same access control lists (ACLs) as your primary application. If you find yourself hitting walls regarding secure integration, professional AI Consulting Services can help map out these complex data flow topologies to ensure you don’t trade security for speed.
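The ACL point is easiest to see in code: filter retrieved documents by the caller’s permissions before anything is placed in the prompt. The `Doc` class and the group names are hypothetical, and this sketch covers only the access check, not sanitization of the text itself.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Doc:
    """A retrieved document with the groups allowed to read it (hypothetical)."""
    text: str
    allowed_groups: FrozenSet[str]


def authorized_context(docs: Iterable[Doc], user_groups: Iterable[str]) -> List[str]:
    """Keep only documents the user could read in the primary application."""
    groups = frozenset(user_groups)
    return [doc.text for doc in docs if doc.allowed_groups & groups]


retrieved = [
    Doc("Public pricing sheet", frozenset({"everyone"})),
    Doc("Unreleased salary bands", frozenset({"hr"})),
]
context = authorized_context(retrieved, user_groups=["everyone", "sales"])
```

The retriever may surface the HR document, but it never reaches the model for a user outside that group.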

Managing the Cost-Intelligence Matrix

The best architects treat their models as a tiered resource.

- Tier 1 (High Complexity): Use massive, general-purpose models for strategic planning and abstract reasoning.

- Tier 2 (Domain Specific): Use DSLMs for classification, extraction, and routine data processing.

- Tier 3 (Edge/Utility): Use tiny, quantized local models for real-time formatting or simple intent classification.

Map your tasks to the lowest possible tier of model complexity. Over-provisioning your AI isn't a sign of sophistication; it’s a failure of architecture.
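The tiers above can be sketched as a simple lookup. The task labels and model names below are placeholders invented for this example, and defaulting unknown tasks to Tier 1 is a conservative assumption rather than a rule from the text.

```python
# Task type -> tier, following the three tiers described above.
TIER_FOR_TASK = {
    "strategic_planning": 1,
    "abstract_reasoning": 1,
    "classification": 2,
    "extraction": 2,
    "routine_processing": 2,
    "formatting": 3,
    "intent_detection": 3,
}

# Placeholder model names, one per tier.
MODEL_FOR_TIER = {
    1: "general-large",
    2: "domain-dslm",
    3: "edge-quantized",
}


def model_for(task_type: str) -> str:
    """Pick the model for a task; unknown tasks fall back to Tier 1."""
    tier = TIER_FOR_TASK.get(task_type, 1)
    return MODEL_FOR_TIER[tier]


assignments = {task: model_for(task) for task in ("extraction", "formatting")}
```

Keeping the mapping in data rather than scattered across call sites makes it cheap to demote a task to a lower tier once a smaller model proves good enough.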

Future-Proofing for 2027 and Beyond

The goal for 2027 is clear: move from passive chatbots to active, autonomous agents. The systems we build today must be capable of handling multi-step, multi-day, or even multi-week workflows that persist across sessions.
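Persisting across sessions mostly comes down to checkpointing. The sketch below saves progress to a JSON file after every step so a later session can resume where the last one stopped. The file name, the state layout, and the `run_session` helper are assumptions for illustration; a real system would likely use a database or a durable workflow engine.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable, List


def load_state(path: Path) -> dict:
    """Read the checkpoint, or start fresh if none exists."""
    if path.exists():
        return json.loads(path.read_text())
    return {"next_step": 0, "results": []}


def run_session(steps: List[Callable[[], str]], path: Path, max_steps: int) -> dict:
    """Run up to max_steps pending steps, checkpointing after each one."""
    state = load_state(path)
    for _ in range(max_steps):
        if state["next_step"] >= len(steps):
            break
        state["results"].append(steps[state["next_step"]]())
        state["next_step"] += 1
        path.write_text(json.dumps(state))
    return state


steps = [lambda: "collected", lambda: "analyzed", lambda: "reported"]

with tempfile.TemporaryDirectory() as tmp:
    checkpoint = Path(tmp) / "workflow.json"
    first = run_session(steps, checkpoint, max_steps=2)   # session one stops early
    second = run_session(steps, checkpoint, max_steps=2)  # session two resumes
```

The second session does not repeat the first two steps. It reads the checkpoint and runs only what is left.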

As the landscape shifts, your primary defense against obsolescence is a modular, event-driven, and data-centric architecture. Stop focusing on the latest model release. Start focusing on the infrastructure that allows you to swap models in and out as the state of the art evolves.
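Swappable models are mostly an interface question. A minimal sketch, assuming a single `complete` method is all your application needs: the `TextModel` protocol and both toy model classes are hypothetical and stand in for whatever vendor or in-house clients you actually wrap.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface the application depends on (hypothetical interface)."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Toy model standing in for today's provider."""

    def complete(self, prompt: str) -> str:
        return "echo: " + prompt


class ShoutModel:
    """Toy model standing in for next year's provider."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


class Summarizer:
    """Application code that never names a concrete model."""

    def __init__(self, model: TextModel) -> None:
        self.model = model

    def summarize(self, text: str) -> str:
        return self.model.complete("Summarize: " + text)


before = Summarizer(EchoModel()).summarize("quarterly report")
after = Summarizer(ShoutModel()).summarize("quarterly report")
```

`Summarizer` is untouched by the swap. Only the object passed to its constructor changes.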

Frequently Asked Questions

What is the primary difference between traditional system design and AI-native design?

Traditional systems are logic-bound, relying on hard-coded rules and deterministic API paths. AI-native systems are data-and-inference-bound, designed to handle the non-deterministic nature of agents by prioritizing flexible orchestration and continuous feedback loops.

Why are companies moving away from large, general-purpose models toward DSLMs?

Organizations are shifting to Domain-Specific Language Models to contain costs, improve accuracy through domain-specific training, and drastically reduce the hallucinations that occur when a general model attempts to operate on niche, proprietary enterprise data.

How does the "Data Mesh" concept improve AI system performance?

By treating data as a product rather than a raw resource in a central lake, domain teams ensure higher data quality. This "clean" data is then fed into RAG and fine-tuning pipelines, resulting in agents that are significantly more reliable and contextually aware.

How can I design an AI system that remains cost-effective as it scales?

Implement serverless compute patterns to ensure you only pay for inference when it is needed. Additionally, use edge inference to minimize cloud egress costs and prioritize smaller, specialized models for high-frequency tasks to avoid wasting expensive compute on simple queries.