10 Essential AI Hallucination Detection and Mitigation Tools for 2026

As artificial intelligence continues to reshape industries, from healthcare and finance to legal services and digital marketing, one critical challenge remains at the forefront of AI reliability: AI hallucinations. AI hallucinations occur when large language models (LLMs) like ChatGPT, Claude, and Gemini confidently generate false, fabricated, or misleading information that appears entirely factual. From hallucinated citations and made-up statistics to invented legal precedents and fake product data, these AI-generated errors pose serious risks to businesses, researchers, and content creators worldwide. Published benchmarks have reported hallucination rates ranging from roughly 3% to 27% depending on the model and task, which makes hallucination detection not just a technical concern but a business-critical priority in 2026.

Fortunately, a new generation of anti-hallucination AI platforms is rising to meet this challenge. The tools covered here, including LogicBalls, Galileo, LangSmith, Lakera Guard, and Langfuse, take a range of approaches, from clarification-first prompting and retrieval-augmented generation (RAG) grounding to confidence scoring, automated evaluation, observability, red teaming, and runtime guardrails, all aimed at making AI outputs more accurate and verifiable. Whether you are an AI developer, enterprise content team, legal professional, or digital marketer, this guide to the 10 best AI hallucination detection and mitigation tools for 2026 will help you identify the right solution to keep your AI outputs grounded, trustworthy, and factually reliable.

Quick Look: The AI Reliability Stack

| Tool | Best For | Free Plan | Starting Price |
|---|---|---|---|
| LogicBalls | General Users | Yes | $5/month (Pro, billed annually) |
| TrueFoundry | Enterprise AI Teams | Demo / Trial | Custom pricing |
| LangSmith | Developers | Yes | $39/user/month (Plus) |
| Galileo | Data Quality & AI Evaluation | Yes | $100/month (Pro, billed annually) |
| Lakera Guard | Security | Yes (Community plan) | Custom pricing (Enterprise) |
| Arize AI | Enterprise Observability | Yes (Phoenix OSS, AX Free) | $50/month (AX Pro) |
| Maxim AI | Production Monitoring | Yes (Developer plan) | $29/seat/month (Professional) |
| Evidently AI | Data Scientists & ML Teams | Yes (Open Source) | Cloud pricing on request |
| Giskard AI | AI Security Testing | Yes (Open Source) | Custom pricing (Enterprise) |
| Langfuse | Open-Source Observability | Yes (Hobby plan) | $29/month (Core) |

1. LogicBalls

Best For: LogicBalls is best suited for content creators, bloggers, business professionals, and non-technical users who need accurate, semantically grounded AI outputs without complex prompt engineering. It is also ideal for freelancers, marketers, and small business owners seeking an affordable platform for hallucination-resistant content generation at scale.

Pros

Its clarification-first technology asks follow-up questions before generating, aiming to stop hallucinations at the prompt level before they occur so that outputs stay contextually accurate and semantically grounded.

Its library of 5,000+ specialized AI tools keeps the AI focused within well-defined, purpose-built contexts, which helps reduce fabricated facts and misleading information.

The user-friendly interface and generous free tier (2,000+ AI tools, 14,000 AI words/month, and 3 free AI models) make hallucination-resistant AI writing accessible to everyone, with no technical expertise required.
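LogicBalls does not document how its clarification step works internally, so the following is only a rough illustration of the general clarification-first idea: check a request for required details and return follow-up questions instead of generating when any are missing. The field names (`topic`, `audience`, `tone`), the `clarify_or_generate` function, and the `fake_generate` stand-in are hypothetical and are not LogicBalls' API.

```python
from typing import Callable, Dict, List, Union

# Hypothetical details a writing task needs before generation is allowed.
REQUIRED_FIELDS: Dict[str, str] = {
    "topic": "What topic should the piece cover?",
    "audience": "Who is the intended audience?",
    "tone": "What tone should it use (e.g. formal, casual)?",
}


def clarify_or_generate(
    request: Dict[str, str], generate: Callable[[Dict[str, str]], str]
) -> Union[List[str], str]:
    """Return clarifying questions if any field is missing, else the generated text."""
    questions = [
        question
        for name, question in REQUIRED_FIELDS.items()
        if not request.get(name, "").strip()
    ]
    if questions:
        # Ask instead of guessing, so the model never fills gaps with invented details.
        return questions
    return generate(request)


def fake_generate(req: Dict[str, str]) -> str:
    # Stand-in for a real model call.
    return f"A {req['tone']} post about {req['topic']} for {req['audience']}."
```

Calling `clarify_or_generate({"topic": "RAG"}, fake_generate)` returns the two unanswered questions rather than a draft built on guesses.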

Cons

The platform is primarily a SaaS writing tool rather than a developer-grade evaluation library, making it less suitable for AI engineers needing deep API access or custom hallucination benchmarking.

Unlike competitors such as Perplexity AI, LogicBalls lacks real-time web citations or live source verification, limiting its use in high-stakes professional and enterprise environments.

The absence of explicit confidence scoring or numerical trust metrics limits its usefulness for teams requiring measurable, auditable hallucination risk assessments.

Pricing

Free Plan: $0/month, including 2,000+ AI tools, 14,000 AI words/month, and 3 free AI models.

Pro Plan: Starts from $5.00/month (billed annually), with expanded tools, higher word limits, and priority access.

Elite & Enterprise Plans: Custom pricing, with full suite access, team collaboration, higher word limits, and dedicated enterprise support.

2. TrueFoundry

Best For: TrueFoundry is best suited for enterprise AI/ML teams, data science leaders, and AI infrastructure engineers who need a secure, scalable, and governance-ready platform for deploying hallucination-controlled LLM agents. It is ideal for organizations operating in regulated industries like healthcare, finance, banking, and government that require SOC 2, HIPAA, and GDPR-compliant AI deployments with full observability and audit logging.

Pros

Its AI Gateway with prompt lifecycle management versions, monitors, and enforces prompt behavior across agents, reducing hallucination risk by keeping LLM outputs consistent, controlled, and repeatable at every stage of deployment.

The platform offers full agent observability and immutable audit logging, tracing every step from prompt execution to tool and model output with metrics, latency tracking, and outcome monitoring, so enterprise teams can see where and why hallucinations may occur.

TrueFoundry's real-time policy enforcement and RBAC governance ensure that AI agents operate strictly within defined boundaries, preventing unauthorized model usage and the overly generalized responses that tend to invite hallucinations across large enterprise teams.
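To make the idea of versioned, enforced prompts concrete, here is a minimal in-memory prompt registry in plain Python: every edit creates a new immutable version, and each agent is pinned to one explicit version so its behavior cannot change silently. The `PromptRegistry` class and its `publish`, `pin`, and `render` methods are hypothetical illustrations, not TrueFoundry's AI Gateway API.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class PromptVersion:
    name: str
    version: int
    template: str


@dataclass
class PromptRegistry:
    _versions: Dict[str, List[PromptVersion]] = field(default_factory=dict)
    _pins: Dict[str, PromptVersion] = field(default_factory=dict)

    def publish(self, name: str, template: str) -> PromptVersion:
        """Store a new immutable version; earlier versions are kept for audit."""
        history = self._versions.setdefault(name, [])
        pv = PromptVersion(name, len(history) + 1, template)
        history.append(pv)
        return pv

    def pin(self, agent: str, name: str, version: int) -> None:
        """Bind an agent to one exact prompt version (1-based)."""
        self._pins[agent] = self._versions[name][version - 1]

    def render(self, agent: str, **values: str) -> str:
        """Render the agent's pinned template; unpinned agents raise KeyError."""
        return self._pins[agent].template.format(**values)
```

The design choice worth noting is that publishing version 2 does not affect an agent pinned to version 1 until someone deliberately re-pins it, which is what keeps outputs repeatable between releases.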

Cons

TrueFoundry is a highly technical, developer-focused platform with a steep learning curve, making it unsuitable for non-technical users, content creators, or small business owners who need simple, accessible hallucination-free AI writing tools.

The platform is primarily focused on infrastructure and deployment governance rather than end-user content generation, meaning it does not itself generate written content for end users the way tools like LogicBalls or Perplexity AI do.

Pricing is not publicly listed for most plans, and the platform is geared toward enterprise contracts, making it hard to access for startups, freelancers, or budget-conscious teams seeking affordable AI hallucination mitigation.

Pricing

Free Trial / Live Demo: Available via sign-up, with access to a live product tour and demo environment.

Starter / Growth Plan: Custom pricing based on compute usage, model deployments, and team size, available upon request.

Enterprise Plan: Full suite with VPC/on-prem deployment, SOC 2 and HIPAA compliance, SSO, RBAC, audit logging, 24/7 SLA-backed support, and dedicated enterprise onboarding; pricing available on request via the sales team.

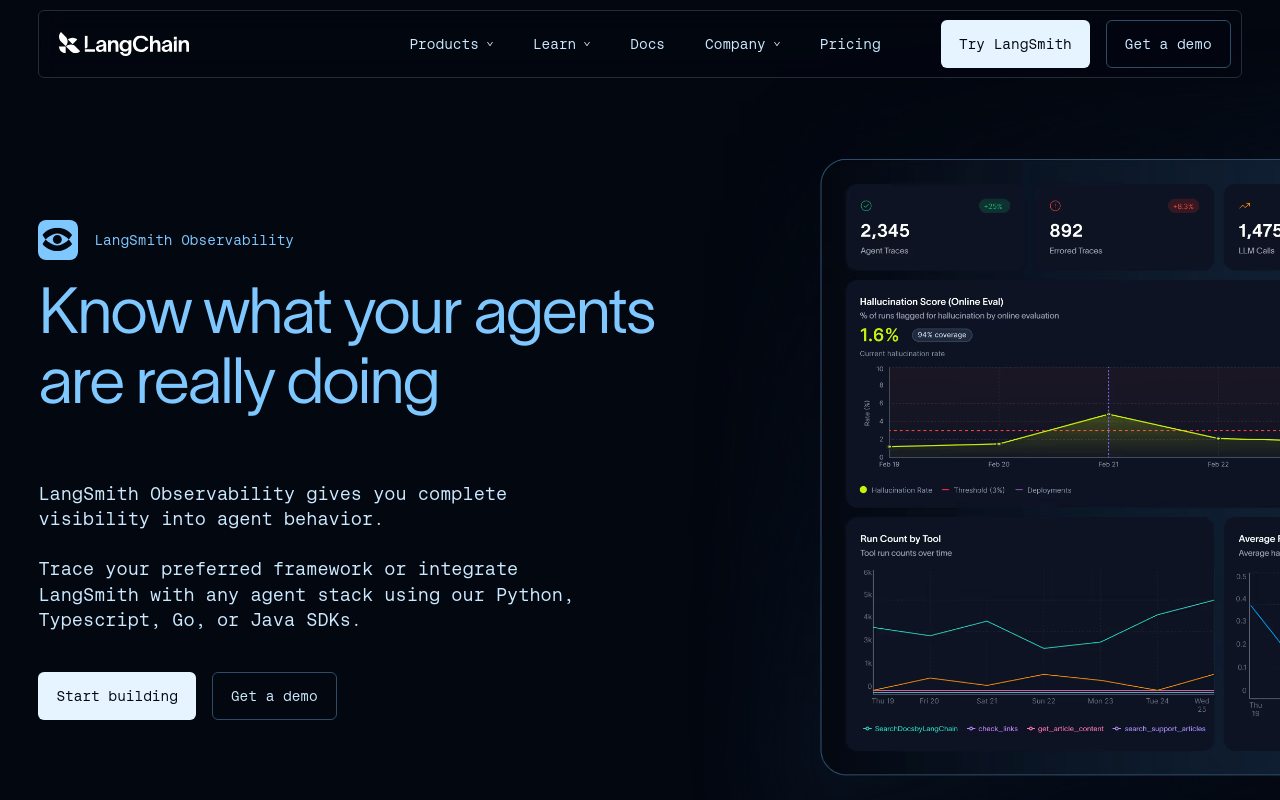

3. LangSmith

Best For: LangSmith is best suited for AI developers, ML engineers, and enterprise LLM teams who need deep observability, hallucination detection, and evaluation capabilities for production-grade AI applications. It is ideal for teams already building with LangChain or LangGraph frameworks who need end-to-end tracing, automated hallucination testing, and real-time monitoring of LLM outputs at scale.

Pros

LangSmith's evaluation framework supports multiple evaluator types, including LLM-as-judge evaluators, human annotation queues, heuristic checks, and pairwise comparisons, and lets you write custom evaluators in Python or TypeScript for hallucination detection and guardrail validation. This makes it one of the more comprehensive hallucination testing platforms available to developer teams.

LangSmith's agent observability traces every step from prompt to tool and model execution with metrics, latency, and outcomes, and its built-in AI assistant, Polly, helps teams quickly understand large traces and pinpoint hallucination-prone failure points.

LangSmith is framework-agnostic, working with any LLM framework through its Python and TypeScript SDKs and OpenTelemetry support, and it offers cloud, bring-your-own-cloud (BYOC), and self-hosted deployment options for teams with strict data residency and compliance requirements.
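Custom heuristic evaluators are ordinary functions that score a run. As one illustrative example, the sketch below flags citation hallucinations: it extracts `[doc-N]` style citations from an answer and checks that each one refers to a document that was actually retrieved. The citation format, the `citation_evaluator` name, and the `{"key": ..., "score": ...}` return shape are assumptions for illustration; check the LangSmith SDK documentation for the exact evaluator signature it expects before wiring this in.

```python
import re
from typing import Dict, Iterable, Union

# Assumed citation style: "[doc-12]".
CITATION = re.compile(r"\[(doc-\d+)\]")


def citation_evaluator(
    answer: str, retrieved_ids: Iterable[str]
) -> Dict[str, Union[str, float]]:
    """Score = share of cited IDs that were actually retrieved (1.0 = no fabricated citations)."""
    cited = set(CITATION.findall(answer))
    if not cited:
        # No citations at all: treat as unverifiable rather than perfect.
        return {"key": "citation_grounding", "score": 0.0}
    valid = cited & set(retrieved_ids)
    return {"key": "citation_grounding", "score": len(valid) / len(cited)}
```

For an answer citing `[doc-1]` and `[doc-7]` when only `doc-1` and `doc-2` were retrieved, the score is 0.5, signalling that one citation points at a source the model never saw.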

Cons

LangSmith integrates most deeply with LangChain and LangGraph. If you are not using LangChain, much of the out-of-the-box convenience disappears: framework-agnostic tracing exists but requires significantly more setup than the native LangChain integration.

The platform is heavily developer-focused with a technical learning curve, making it entirely unsuitable for non-technical users, content creators, marketers, or business professionals who simply need hallucination-free AI writing without engineering overhead.

Pricing escalates quickly at scale: the Plus tier runs $39 per user per month with only 10,000 base traces included, which can become expensive for large teams running high-volume LLM applications in production.

Pricing

Developer Plan: Free; includes 1 seat, 5,000 base traces per month, and access to LangSmith observability and evaluation tools. Ideal for solo developers and personal projects.

Plus Plan: Starts at $39/user/month; includes 10,000 base traces per month, unlimited seats, team collaboration features, and 1 free dev-sized agent deployment.

Enterprise Plan: Custom pricing; includes self-hosted Kubernetes deployment, advanced security, SSO, RBAC, HIPAA/SOC 2 compliance, dedicated support, and SLA-backed uptime guarantees.

4. Galileo

Best For: Galileo is best suited for AI engineers, ML teams, and enterprise organizations building and deploying production-grade LLM applications and agentic AI systems that require real-time hallucination detection, automated evaluation pipelines, and production guardrails. It is ideal for teams in regulated and high-stakes industries such as finance, healthcare, and enterprise technology who need measurable, auditable, and continuously improving AI reliability at scale.

Pros

Galileo's eval-to-guardrail lifecycle turns pre-production evaluations into production governance: eval scores control agent actions, tool access, and escalation paths with no glue code required, making it one of the more seamless hallucination prevention pipelines available to enterprise AI teams.

Its insights engine analyzes agent behavior to identify failure modes, surface hidden patterns, and prescribe actionable fixes. For example, it can detect that a hallucination caused incorrect tool inputs and recommend adding few-shot examples to correct it, which speeds up debugging and shortens time-to-production.

Galileo supports SaaS, Virtual Private Cloud, and on-premises deployment, giving enterprise teams full control over data residency, security, and compliance, which suits organizations with the strictest data governance and hallucination audit requirements.
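The eval-to-guardrail idea amounts to reusing an evaluation score as a runtime decision. The sketch below maps a score to one of three actions: high-scoring responses pass, borderline ones are escalated to a human, and low-scoring ones are blocked. It assumes a groundedness score between 0 and 1 is already available; the thresholds, the `Decision` names, and the `guardrail` function are hypothetical, and Galileo configures this through its own product rather than through code like this.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"
    BLOCK = "block"


def guardrail(score: float, allow_at: float = 0.8, block_below: float = 0.5) -> Decision:
    """Turn a 0-1 groundedness score into an action (thresholds are illustrative)."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be in [0, 1]")
    if score >= allow_at:
        return Decision.ALLOW
    if score < block_below:
        return Decision.BLOCK
    # Anything in between goes to a human reviewer.
    return Decision.ESCALATE
```

Using the same thresholds in offline evaluation and in production is what keeps the two stages consistent: a response that would fail an eval also gets stopped at runtime.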

Cons

Galileo is a highly technical, developer-focused evaluation platform that requires significant engineering expertise to set up and maintain, making it entirely unsuitable for non-technical users, content creators, or small business owners seeking simple hallucination-free AI writing tools.

The Pro plan starts at $100/month billed annually, with pricing that scales with the number of traces. This can become expensive quickly for teams running high-volume LLM applications, making it less accessible for startups and budget-conscious teams.

While Galileo excels at evaluation and observability, it is not an end-user AI writing or content generation tool, so organizations need additional platforms alongside it to actually produce AI content for business use cases.

Pricing

Free Plan: $0/month; includes 5,000 traces per month, unlimited users, and unlimited custom evals. Ideal for developers and small teams experimenting with hallucination detection.

Pro Plan: $100/month (billed annually); includes 50,000 traces per month, standard RBAC, advanced analytics and insights, and dedicated Slack support, with pricing scaling based on number of traces.

Enterprise Plan: Custom pricing; includes unlimited traces, custom rate limits, hosted/VPC/on-prem deployment, enterprise-grade RBAC and SSO, real-time guardrails, 24/7 support, low-latency dedicated inference servers, and forward-deployed engineering support.

5. Lakera Guard

Best For: Lakera is best suited for enterprise security teams, AI developers, ML engineers, and DevOps professionals building and deploying production-grade GenAI applications that require real-time protection against prompt injection attacks, jailbreaks, data leakage, and toxic content generation. It is ideal for organizations in regulated industries such as banking, healthcare, and financial services that need an AI-native security layer to prevent hallucination-inducing prompt attacks and maintain compliance with global AI safety standards.

Pros

Lakera's defenses are trained on a large proprietary dataset of real-world attacks collected through its Gandalf platform, which has generated over 80 million data points from more than 1 million users. This gives it battle-tested intelligence against prompt injection, jailbreaking, and the semantic abuse that can lead to hallucinated outputs.

Lakera's model-agnostic design works with any LLM, including GPT, Claude, Gemini, and custom models. Its AI Model Risk Index scores LLMs on a 0-100 risk scale based on adversarial attack performance, giving security teams actionable data for selecting the safest model for their use case.

Lakera reports that its context-aware approach delivers high precision and reduces risk by 3–4 orders of magnitude, and its central policy controls let teams customize security policies across applications without changing any code.
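The sketch below only shows where an input-screening layer sits in an LLM call path: user input is checked before it reaches the model, and flagged input never gets there. The two regex patterns are a deliberately naive placeholder, far weaker than a trained classifier such as Lakera Guard, and `screen_input`, `guarded_call`, and the `call_model` parameter are hypothetical names, not Lakera's API.

```python
import re
from typing import Callable, List, Tuple

# Placeholder patterns only; real attacks are far more varied than this.
SUSPICIOUS: List[re.Pattern] = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (the |your )?system prompt", re.IGNORECASE),
]


def screen_input(text: str) -> Tuple[bool, List[str]]:
    """Return (flagged, list of matched patterns)."""
    hits = [p.pattern for p in SUSPICIOUS if p.search(text)]
    return bool(hits), hits


def guarded_call(text: str, call_model: Callable[[str], str]) -> str:
    """Screen the input first; only clean input is forwarded to the model."""
    flagged, _ = screen_input(text)
    if flagged:
        return "Request blocked by input policy."
    return call_model(text)
```

In a real deployment the `screen_input` step would be replaced by a call to the security service, while the surrounding control flow stays the same.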

Cons

Lakera is a security and threat prevention platform, not an AI content generation or hallucination evaluation tool: it blocks prompt-level attacks that can cause hallucinations but does not evaluate, score, or detect hallucinations in AI-generated outputs the way tools like Galileo or LangSmith do.

The platform is aimed squarely at developers and security engineers, requiring API integration and technical expertise that put it out of reach for non-technical users, content creators, or small business owners looking for simple AI writing solutions.

Enterprise pricing is not publicly listed and is custom-quoted based on request volume and organizational scope, making it difficult for startups and budget-conscious teams to evaluate costs upfront without going through a sales process.

Pricing

Community Plan: $0/month; includes up to 10,000 API requests per month with prompts up to 8,000 tokens each. Designed for individual developers and researchers testing the platform; not recommended for live production use.

Enterprise Plan: Custom pricing; includes flexible request volumes, configurable prompt sizes, enterprise-grade SLAs, dedicated support, SaaS and self-hosted deployment options, SSO, compliance controls, and full access to Lakera Guard and Lakera Red products. Pricing available on request via the sales team.

6. Arize AI

Best For: Arize AI is best suited for enterprise AI engineering teams, ML engineers, data scientists, and LLMOps professionals who need a unified platform for building, evaluating, observing, and improving AI agents and LLM applications in production. It is ideal for organizations like PepsiCo, Siemens, Microsoft, TripAdvisor, and DoorDash that require petabyte-scale AI observability, hallucination detection, and evaluation infrastructure to keep AI systems trustworthy and high-performing at scale.

Pros

Arize's Alyx AI engineering agent helps make agents self-improving by using evaluations and annotations to automatically optimize prompts. This targets one of the most common root causes of AI hallucinations by continuously refining prompt quality based on real production data and evaluation feedback.

Arize offers a fully open-source foundation through Phoenix OSS, built on OpenTelemetry with no proprietary frameworks and no data lock-in. This gives teams full transparency into evaluation models and hallucination detection logic, along with the flexibility to self-host for complete data sovereignty.

Arize provides a comprehensive evaluation suite covering offline LLM-as-judge evaluators, code evals, online session evaluations, agent path evaluations, human annotation queues, labeling queues, and user feedback tracking, making it one of the most complete hallucination detection and measurement platforms available to production AI teams.

Cons

The AX Free plan is significantly limited, offering only 25,000 trace spans per month, 1 GB of ingestion, and just 7 days of data retention. That is insufficient for meaningful hallucination trend analysis or production-level LLM monitoring, so most real-world teams will need to upgrade to a paid plan quickly.

Arize is a highly technical, developer- and enterprise-focused platform with significant onboarding complexity, making it unsuitable for non-technical users, content creators, marketers, or small business owners seeking simple and accessible AI writing tools.

While Arize excels at observability and evaluation, it is not an AI content generation tool and does not block unsafe inputs or outputs in real time the way security-focused platforms like Lakera do. Teams must build their own guardrail and intervention logic on top of Arize's monitoring and evaluation infrastructure.

Pricing

Phoenix (Open Source): Free; fully self-hosted and open-source, with user-managed trace spans, ingestion volume, projects, and retention. Ideal for small teams and developers who need complete data sovereignty.

AX Pro: $50/month; includes 50,000 trace spans/month, 10 GB ingestion/month, 15 days of retention, higher rate limits, and email support. Additional spans cost $10 per million and additional storage $3 per GB.
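A quick worked estimate helps when budgeting for AX Pro. The sketch assumes the listed rates apply linearly (a $50 base with 50,000 spans and 10 GB included, then $10 per additional million spans and $3 per additional GB), treats the "additional storage" rate as applying to ingestion beyond the included 10 GB, and ignores taxes, discounts, and billing increments; none of these details are confirmed above, so check Arize's pricing page before relying on the numbers.

```python
def arize_ax_pro_estimate(spans: int, ingest_gb: float) -> float:
    """Rough monthly estimate from the AX Pro rates listed above (linear proration assumed)."""
    base, included_spans, included_gb = 50.0, 50_000, 10.0
    extra_spans = max(0, spans - included_spans)
    extra_gb = max(0.0, ingest_gb - included_gb)
    # $10 per additional million spans, $3 per additional GB (assumed linear).
    return base + extra_spans / 1_000_000 * 10.0 + extra_gb * 3.0
```

Under these assumptions, 2,050,000 spans and 25 GB of ingestion in a month would come to $50 + $20 + $45 = $115.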

7. Maxim AI

Best For: Maxim AI is best suited for AI engineers, product managers, and cross-functional AI teams at growing startups and enterprises who need an end-to-end platform to simulate, evaluate, and observe AI agents with speed and reliability. It is ideal for teams building RAG pipelines, voice agents, multi-agent workflows, and LLM-powered applications that require systematic hallucination detection, automated CI/CD evaluation pipelines, and real-time production monitoring, all without requiring deep prompt engineering expertise from every team member.

Pros

Maxim's agent simulation engine tests AI agents across diverse scenarios using AI-powered simulations and user personas, letting teams detect hallucination-prone failure modes before deployment rather than discovering them in live production, where they can cause real business damage.

Maxim is built for cross-functional collaboration, with an intuitive no-code UI that lets product managers and non-technical team members define, run, and analyze hallucination evaluations without waiting on engineering, spreading AI quality management across the entire organization.

Maxim is compliant with SOC 2 Type II, ISO 27001, HIPAA, and GDPR standards and supports in-VPC deployment for organizations with strict data residency requirements, so hallucination detection and AI observability workflows do not compromise enterprise security or regulatory compliance.
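Persona-driven simulation can be sketched without any vendor SDK: run every scenario against every persona, check each reply, and collect the combinations that fail before anything ships. The personas, scenarios, `simulate` function, and the toy agent and checker at the bottom are all hypothetical stand-ins; Maxim's own engine generates and scores these runs for you.

```python
from itertools import product
from typing import Callable, Dict, List, Tuple

# Hypothetical personas, expressed as a prefix on the user's message.
PERSONAS: Dict[str, str] = {
    "novice": "I'm new to this. ",
    "impatient": "Quick answer only: ",
}
SCENARIOS: List[str] = ["What is the refund window?", "Do you ship to Mars?"]


def simulate(
    agent: Callable[[str], str],
    is_grounded: Callable[[str, str], bool],
) -> List[Tuple[str, str, str]]:
    """Return (persona, scenario, reply) triples whose reply failed the check."""
    failures = []
    for (persona, prefix), scenario in product(PERSONAS.items(), SCENARIOS):
        reply = agent(prefix + scenario)
        if not is_grounded(scenario, reply):
            failures.append((persona, scenario, reply))
    return failures


# Toy agent that invents a capability, and a checker that catches it.
def toy_agent(message: str) -> str:
    if "Mars" in message:
        return "Yes, 3-day shipping to Mars."
    return "Refunds are accepted within 30 days."


def toy_check(scenario: str, reply: str) -> bool:
    return not ("Mars" in scenario and reply.startswith("Yes"))
```

Running `simulate(toy_agent, toy_check)` reports the fabricated Mars-shipping answer once per persona, which is exactly the kind of failure you want surfaced before deployment.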

Cons

The Developer (free) plan is significantly limited, capping users at 3 seats, 10,000 logs per month, and only 3 days of data retention. That is insufficient for meaningful hallucination trend analysis or production-level AI monitoring, pushing most real-world use cases toward paid plans quickly.

The per-seat pricing model, starting at $29/seat/month for Professional and $49/seat/month for Business, can become expensive for larger teams, especially organizations that need to give access to many product, engineering, and QA stakeholders involved in hallucination review and evaluation workflows.

While powerful for agent evaluation and observability, Maxim does not offer active real-time hallucination blocking or prompt-level security guardrails the way platforms like Lakera or Galileo Protect do, so it detects and alerts on hallucinations after they occur rather than preventing them at the inference layer.

Pricing

Professional Plan: $29/seat/month billed monthly - includes unlimited seats, up to 3 workspaces, 100,000 logs per month, 7-day data retention, simulation runs, online evaluations, and email support, with a 14-day free trial available.

Business Plan: $49/seat/month billed monthly - includes unlimited workspaces, up to 500,000 logs per month, 30-day data retention, RBAC support, PII management, scheduled runs, custom dashboards, and private Slack support, with a 14-day free trial available.

8. Evidently AI

Best For: Evidently AI is best suited for data scientists, ML engineers, MLOps teams, and AI product builders who need a transparent, open-source-powered platform to evaluate, test, and monitor LLM applications, RAG pipelines, AI agents, and traditional ML models throughout their entire lifecycle. It is ideal for teams at companies like DeepL, Wise, Plaid, and Flo Health who require continuous hallucination detection, data drift monitoring, adversarial testing, and production-grade AI observability - all built on a trusted open-source foundation with over 100 built-in metrics.

Pros

Evidently's open-source Python library provides 100+ built-in metrics covering hallucinations, factuality, RAG retrieval quality, PII detection, toxicity, sentiment, and custom LLM-as-judge evaluations - giving teams a transparent, extensible, and freely available foundation for hallucination detection that can be customized to any AI use case without vendor lock-in.

Evidently supports a uniquely broad range of AI testing use cases in a single platform - from RAG hallucination testing and adversarial red teaming to AI agent multi-step workflow validation and traditional ML model drift monitoring - making it one of the few tools that serves both generative AI and predictive ML teams within the same organization under one unified observability framework.

Evidently's LLM-as-judge evaluation framework supports customizable judge templates implementing best practices like chain-of-thought prompting, allowing teams to add their own plain-text evaluation criteria and choose different LLMs for assessment - giving organizations full control over how hallucinations are defined, detected, and measured according to their specific domain and use case requirements.
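Here is a rough illustration of what a customizable judge template with plain-text criteria and chain-of-thought looks like. The template wording, the `VERDICT:` output convention, the `judge_answer` function, and the `judge` callable (standing in for whichever LLM you choose) are assumptions made for this sketch; it does not use the Evidently library, which ships its own templates and parsing.

```python
import re
from typing import Callable, Optional

JUDGE_TEMPLATE = """You are evaluating an answer for hallucinations.
Criteria: {criteria}

Context:
{context}

Answer:
{answer}

Think step by step about whether every claim in the answer is supported
by the context, then finish with a final line: VERDICT: FAITHFUL or VERDICT: HALLUCINATED."""

# The verdict must be the last thing in the judge's reply.
VERDICT = re.compile(r"VERDICT:\s*(FAITHFUL|HALLUCINATED)\s*$", re.IGNORECASE)


def judge_answer(
    context: str, answer: str, criteria: str, judge: Callable[[str], str]
) -> Optional[bool]:
    """Return True if judged faithful, False if hallucinated, None if unparseable."""
    prompt = JUDGE_TEMPLATE.format(criteria=criteria, context=context, answer=answer)
    reply = judge(prompt).strip()
    match = VERDICT.search(reply)
    if match is None:
        return None
    return match.group(1).upper() == "FAITHFUL"
```

Returning `None` for unparseable replies, rather than guessing, keeps judge formatting failures visible instead of silently counting them as passes.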

Cons

The open-source (OSS) version requires teams to be fully self-sufficient in deploying, managing, troubleshooting, and scaling the platform within their own environment, including backups and upgrades, with only documentation and a Discord community forum for support. This makes it unsuitable for teams without dedicated ML engineering or DevOps resources.

Evidently AI is a deeply technical, code-first platform designed for data scientists and ML engineers - making it entirely inaccessible for non-technical users, content creators, marketers, or business professionals who need simple hallucination-free AI writing tools without any Python or infrastructure expertise.

While Evidently excels at evaluation, testing, and observability, it does not offer active real-time hallucination blocking, prompt-level security guardrails, or LLM gateway controls - meaning it identifies and monitors hallucination risks but does not prevent them from reaching end users at the inference layer the way platforms like Lakera or Galileo Protect do.

Pricing

Evidently OSS (Open Source): Free - the core Evidently Python library is open-source under the Apache 2.0 license, ideal for individual data scientists and small teams running evaluations independently in a Python environment. A lightweight OSS platform deployment is also available for storing and visualizing evaluation results.

Evidently Cloud: Paid plans available - the full cloud-based collaborative AI observability platform includes no-code evaluation workflows, role-based access control, alerting, user management, a scalable backend, and dedicated support. Pricing tiers scale based on usage volume and team size, available on request via the pricing page.

Evidently Enterprise (Self-Hosted): Custom pricing - designed for teams with strict security requirements, offering a full-featured platform equivalent to Evidently Cloud deployed in private clouds or on-premises, with dedicated onboarding, training sessions, and direct access to the developers who built the platform.

9. Giskard AI

Best For: Giskard AI is best suited for enterprise AI security teams, ML engineers, domain experts, and product managers in regulated industries who need a proactive, continuous red teaming and LLM evaluation platform to detect hallucinations, security vulnerabilities, and quality failures in AI agents before they reach production. It is ideal for organizations in banking, finance, retail, and manufacturing - including teams at L'Oréal, AXA, BNP Paribas, Société Générale, Michelin, Decathlon, and Google DeepMind - that require the highest standards of AI safety, GDPR compliance, and sovereign data infrastructure for their LLM deployments.

Pros

Giskard automatically converts detected vulnerabilities and hallucinations into reproducible test suites that continuously enrich your golden test dataset - transforming one-time discoveries into permanent regression protection that prevents the same hallucinations from recurring after every model update, prompt change, or new deployment.

Giskard's Human-in-the-Loop visual dashboards unify business stakeholders, engineers, and security teams around a common collaborative interface for reviewing, customizing, and approving AI tests - making hallucination detection and AI quality a shared organizational goal rather than an isolated engineering concern.

Giskard is a European-built platform with data residency and isolation guarantees, a zero-training policy protecting customer IP, end-to-end encryption, native GDPR compliance, SOC 2 Type II, and HIPAA compliance. This gives it one of the strongest data sovereignty and regulatory compliance postures among AI hallucination testing platforms for enterprise teams operating under strict European and global data protection regulations.
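The idea of turning a one-off discovery into permanent regression protection can be sketched in a few lines: each failing case is appended to a golden dataset, and every later model or prompt version is re-run against the whole set. The JSON file layout, the `record_failure` and `run_regression` names, and the simple "output must not contain the fabricated claim" check are hypothetical simplifications; Giskard generates and manages these suites itself.

```python
import json
from pathlib import Path
from typing import Callable, Dict, List


def record_failure(golden: Path, prompt: str, must_not_contain: str) -> None:
    """Append a discovered hallucination to the golden dataset (a JSON list), skipping duplicates."""
    cases: List[Dict[str, str]] = json.loads(golden.read_text()) if golden.exists() else []
    case = {"prompt": prompt, "must_not_contain": must_not_contain}
    if case not in cases:
        cases.append(case)
        golden.write_text(json.dumps(cases, indent=2))


def run_regression(golden: Path, model: Callable[[str], str]) -> List[str]:
    """Re-run every stored case; return the prompts whose output repeats the fabrication."""
    cases = json.loads(golden.read_text())
    return [
        c["prompt"]
        for c in cases
        if c["must_not_contain"].lower() in model(c["prompt"]).lower()
    ]
```

Because the dataset only grows, a hallucination fixed once keeps being checked on every future release.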

Cons

Giskard's pricing is a simple Free (open-source) vs. Enterprise model with no mid-tier self-serve paid plan. Growing teams and mid-market companies that outgrow the open-source version must engage the enterprise sales team directly, with no public pricing and no affordable stepping stone between free and full enterprise.

The Giskard Hub currently supports only conversational AI agents in text-to-text mode accessible through an API endpoint, limiting its applicability for teams building multimodal, voice-based, or non-conversational AI systems that also need hallucination detection and quality evaluation.

Giskard is a pre-deployment and continuous testing platform focused on vulnerability detection and evaluation - it does not provide real-time inference-layer hallucination blocking, LLM gateway controls, or live production guardrails the way platforms like Lakera or Galileo Protect do, meaning it must be paired with a separate runtime protection solution for complete end-to-end hallucination prevention.

Pricing

Free (Open-Source): $0 - includes the open-source Python library with local deployment, basic LLM vulnerability scanning using adversarial techniques, basic RAG evaluation reports using correctness metrics, best-effort maintenance, and community support. Designed for solo developers and individual LLM experiments.

Enterprise (Giskard Hub): Custom pricing - includes comprehensive agent-specific LLM vulnerability scanning with 50+ automated adversarial probes including multi-turn attacks, regular updates with the latest adversarial techniques, OWASP alignment, tool calling security validation, customizable scenario-based generation, fine-grained RAG quality metrics, Human-in-the-Loop review dashboards, SSO and RBAC, CI/CD integration, hybrid deployment options (on-premise, private cloud, or SaaS), data residency and isolation, zero-training policy and IP protection, SOC 2, HIPAA and GDPR compliance, dedicated support with SLAs, and optional consulting on agent corrections and custom AI guardrails.

10. Langfuse

Best For: Langfuse is best suited for AI engineers, developers, and LLM application teams who need open-source, self-hostable tracing and evaluation to detect and analyze hallucinations in their LLM applications. It is also ideal for organizations with strict data residency, compliance, or privacy requirements, and for startups, educational users, and open-source projects looking for transparent, usage-based observability pricing.

Pros

Langfuse is fully open-source and self-hostable, giving teams complete data sovereignty and the ability to run the entire hallucination observability stack within their own infrastructure - making it a strong choice for organizations with strict data residency, compliance, or privacy requirements that cannot send LLM traces to third-party cloud platforms.

Langfuse offers a comprehensive evaluation suite including LLM-as-judge evaluators, human annotation queues, custom evaluation scores, external evaluation pipelines, and user feedback tracking - covering the full spectrum of hallucination detection methods from automated scoring to expert human review in a single unified platform.

Langfuse provides transparent, usage-based pricing starting completely free with 50,000 units per month on the Hobby plan, scaling to $29/month for Core and $199/month for Pro - with generous discounts available for startups, educational users, and open-source projects, making enterprise-grade hallucination observability accessible at every budget level.

Cons

The Hobby plan limits users to just 2 seats and 30 days of data access, and the free tier's ingestion throughput is capped at 1,000 requests per minute - which can be restrictive for growing teams or production applications with high-volume LLM traffic that need longer data retention for hallucination trend analysis.

Langfuse is a developer-first, technical platform that requires engineering expertise to set up, integrate, and maintain - making it entirely unsuitable for non-technical users, content creators, or business professionals who need simple hallucination-free AI writing tools without any coding or infrastructure overhead.

While Langfuse excels at tracing and observability, it does not offer built-in real-time production guardrails or active hallucination blocking - meaning teams must build their own guardrail logic on top of Langfuse's evaluation infrastructure to actively prevent hallucinations from reaching end users.
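If you stay on the Hobby tier, the 1,000 requests-per-minute ingestion cap mentioned above is something a client can respect on its own side. The sketch below is a generic sliding-window limiter in plain Python that tells a sender how long to wait before the next request; it is not part of the Langfuse SDK, the `SlidingWindowLimiter` name is hypothetical, and the injectable `clock` exists only to make it testable.

```python
import time
from collections import deque
from typing import Callable, Deque


class SlidingWindowLimiter:
    """Allow at most `limit` requests in any rolling `window` seconds."""

    def __init__(self, limit: int = 1000, window: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window = window
        self.clock = clock
        self._sent: Deque[float] = deque()

    def wait_time(self) -> float:
        """Seconds to wait before the next request may be sent (0.0 = send now)."""
        now = self.clock()
        # Drop timestamps that have left the rolling window.
        while self._sent and now - self._sent[0] >= self.window:
            self._sent.popleft()
        if len(self._sent) < self.limit:
            return 0.0
        return self.window - (now - self._sent[0])

    def record(self) -> None:
        """Call after each request is actually sent."""
        self._sent.append(self.clock())
```

A sender would call `wait_time()`, sleep for that many seconds if it is positive, send, and then call `record()`.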

Pricing

Hobby Plan: Free; includes 50,000 units/month, 2 seats, and 30 days of data access, with ingestion capped at 1,000 requests per minute.

Core Plan: $29/month; includes 100,000 units/month, 90 days of data access, unlimited users, in-app support, and additional usage at $8 per 100,000 units, with volume discounts available.

Pro Plan: $199/month.

Enterprise Plan: $2,499/month; includes everything in Pro plus audit logs, SCIM API, custom rate limits, uptime SLA, support SLA, a dedicated support engineer, and an optional yearly commitment with custom volume pricing, architecture reviews, and AWS Marketplace billing.

Frequently Asked Questions

What is an AI hallucination?

An AI hallucination occurs when a generative model produces information that is factually incorrect, nonsensical, or not supported by the underlying data, yet presents it with a high degree of confidence. This happens because LLMs are trained to predict the next likely token in a sequence based on patterns learned during training, rather than checking facts against a verified source of truth.

How do I measure the reliability of my AI system?

You can measure reliability by implementing automated evaluation frameworks that compare model outputs against ground truth datasets or by using LLM-as-a-judge techniques. Tools like RAGAS and DeepEval allow developers to quantify metrics such as faithfulness, answer relevance, and context precision, providing a score that helps identify when a model is drifting into inaccuracies.
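To show what a faithfulness-style score measures, the sketch below follows the general idea behind the RAGAS faithfulness metric (claims in the answer supported by the context, divided by total claims), but replaces the LLM-based claim extraction and verification with naive sentence splitting and word overlap. The splitting rule, the 0.6 overlap threshold, and the function names are assumptions for illustration; real tools use a model to judge whether each claim is supported.

```python
import re
from typing import List, Set


def _words(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def split_claims(answer: str) -> List[str]:
    """Naive claim extraction: one claim per sentence."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]


def faithfulness(answer: str, context: str, threshold: float = 0.6) -> float:
    """Share of answer claims whose words mostly appear in the context."""
    claims = split_claims(answer)
    if not claims:
        return 0.0
    ctx = _words(context)
    supported = 0
    for claim in claims:
        words = _words(claim)
        if words and len(words & ctx) / len(words) >= threshold:
            supported += 1
    return supported / len(claims)
```

With the context "The Eiffel Tower is in Paris. It was completed in 1889." an answer that adds "It was designed by Leonardo da Vinci." scores 0.5: the first claim is supported, the invented one is not.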

Is it possible to stop hallucinations completely?

While it is currently impossible to eliminate hallucinations entirely in generative models, you can significantly reduce them using RAG (Retrieval-Augmented Generation) architectures and proactive guardrails. By giving the model verified, context-specific documents and using security layers like Lakera Guard to filter inputs and outputs, businesses can bring the error rate down to an acceptable production threshold.
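The grounding half of that advice can be sketched as follows: retrieve the most relevant verified documents, then build a prompt that tells the model to answer only from them and to say so when they do not contain the answer. Retrieval here is simple word-overlap ranking standing in for a real vector search, and the prompt wording and the `retrieve` and `grounded_prompt` names are assumptions rather than any particular framework's API.

```python
import re
from typing import Dict, List, Set


def _tokens(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: Dict[str, str], k: int = 2) -> List[str]:
    """Return the IDs of the k documents sharing the most words with the query."""
    q = _tokens(query)
    ranked = sorted(docs, key=lambda d: len(q & _tokens(docs[d])), reverse=True)
    return ranked[:k]


def grounded_prompt(query: str, docs: Dict[str, str], k: int = 2) -> str:
    """Build a prompt restricted to the retrieved sources, each labeled by ID."""
    ids = retrieve(query, docs, k)
    context = "\n".join(f"[{i}] {docs[i]}" for i in ids)
    return (
        "Answer using only the sources below and cite them by ID. "
        "If they do not contain the answer, reply \"I don't know.\"\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )
```

Labeling each source with an ID also makes the model's citations checkable afterwards, which is what citation-grounding evaluators rely on.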

Why do I need observability tools if my AI works fine in testing?

AI models often behave differently in production environments compared to controlled testing scenarios due to varying user inputs and evolving data distributions. Observability tools allow you to track performance in real-time, log traces for debugging, and detect drift, ensuring that your system remains accurate and reliable even as your user base and data complexity grow.
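One common way to quantify the drift mentioned above is the Population Stability Index (PSI), which compares how a feature or score is distributed in a baseline window versus production: PSI = sum over bins of (p_i - q_i) * ln(p_i / q_i). The sketch below computes it over pre-binned proportions in plain Python. The small epsilon for empty bins is a common convention, the often-quoted rule of thumb that values above roughly 0.2 signal meaningful drift is a heuristic rather than a guarantee, and observability tools may use other statistical tests.

```python
import math
from typing import Sequence


def psi(baseline: Sequence[float], production: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index over matching bins of proportions."""
    if len(baseline) != len(production):
        raise ValueError("baseline and production must have the same bins")
    total = 0.0
    for p, q in zip(baseline, production):
        p, q = max(p, eps), max(q, eps)  # avoid log(0) for empty bins
        total += (p - q) * math.log(p / q)
    return total
```

Identical distributions give a PSI of 0, while a shift from a 50/50 split to 90/10 gives roughly 0.88, well past the usual drift threshold.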