We’re witnessing the most radical shift in software architecture since the cloud killed the desktop app. For the last couple of years, we’ve been playing with "chatbots"—passive little widgets that summarize emails or spit out quick answers. They were fun, but they were essentially just toys.

Now, we’re entering the era of the autonomous operator.

An autonomous agent isn't just a chatterbox. It’s a system that can look at its surroundings, reason through a messy, multi-step problem, and pull the levers on its own tools to get the job done—all without a human holding its hand. We’re moving away from software that just talks to software that actually does.

If early Generative AI implementations were like a nervous intern constantly asking for permission, the new generation is closer to a seasoned digital employee. It navigates APIs, updates databases, and, crucially, can catch and correct many of its own mistakes when things go sideways.

Why the sudden pivot?

The industry finally hit a wall. Enterprise leaders are finished with "hallucinating" prototypes. For two years, we obsessed over parameter counts and benchmark scores, acting like bigger models were the only way to win. That era is dead. Today, the only thing that matters is reliability.

An agent is only as good as its ability to finish a task—like reconciling a complex ledger or auditing a software deployment—without face-planting halfway through.

The "plumbing" of the internet has finally caught up to the reasoning power of LLMs. We’re moving toward "Headless AI." In this world, agents don’t need a human-friendly browser interface. They talk directly to your CRM, your ERP, and your cloud infrastructure via APIs. By cutting the human out of the click-path, we’re finally removing the single biggest bottleneck in digital productivity.

The Engine Room: How Agents Actually Work

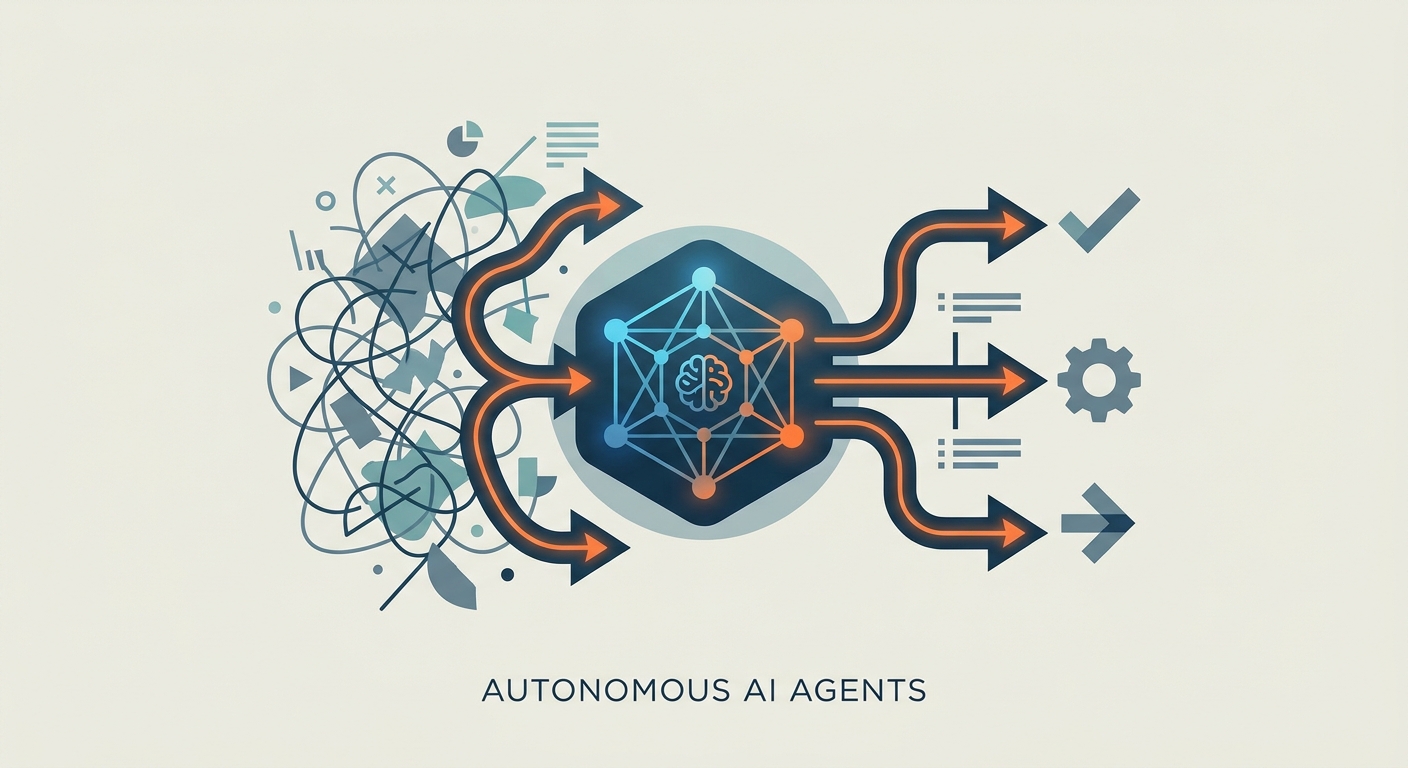

At the heart of every agent is a cycle often called the "Thought-Action-Observation" loop.

Standard chatbots are glorified autocomplete; they just predict the next word. An agent is different. It’s architected to pause, reflect, and iterate. It perceives a task, breaks it down into logical chunks, executes a tool (like an API call), and then stops to check the output. If the result is garbage, it loops back, changes the plan, and takes another swing at it.
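The loop can be sketched in a few lines of Python. This is a minimal illustration, not a production agent. `toy_planner` is a hypothetical stand-in for the LLM call that produces each Thought and Action, and `get_invoice_total` is a hypothetical tool standing in for a real API. The planner deliberately starts with a bad invoice id, so you can watch the agent observe the error and change its plan.

```python
from typing import Callable, Optional

# Hypothetical tool standing in for a real API call.
def get_invoice_total(invoice_id: str) -> float:
    totals = {"INV-1": 120.0, "INV-2": 80.5}
    return totals[invoice_id]  # raises KeyError for an unknown id

TOOLS: dict[str, Callable[..., float]] = {"get_invoice_total": get_invoice_total}

# (thought, tool name, tool arguments)
Action = tuple[str, str, dict]


def toy_planner(goal: str, history: list[dict]) -> Optional[Action]:
    """Hypothetical stand-in for the LLM: returns the next action, or None when done."""
    if not history:
        return ("Look up the invoice named in the request.",
                "get_invoice_total", {"invoice_id": "INV-9"})
    if history[-1]["error"]:
        return ("That id failed; retry with the id from the ledger.",
                "get_invoice_total", {"invoice_id": "INV-1"})
    return None  # the last observation succeeded, so stop


def run_agent(goal: str, planner: Callable[[str, list[dict]], Optional[Action]],
              max_steps: int = 5) -> list[dict]:
    history: list[dict] = []
    for _ in range(max_steps):
        step = planner(goal, history)  # Thought: decide what to do next
        if step is None:
            break
        thought, tool_name, args = step
        try:
            result, error = TOOLS[tool_name](**args), None  # Action: call the tool
        except Exception as exc:
            result, error = None, repr(exc)
        history.append({"thought": thought, "tool": tool_name, "args": args,
                        "observation": result, "error": error})  # Observation
    return history


trace = run_agent("What is the total on the customer's invoice?", toy_planner)
```

The `max_steps` cap matters: without it, a planner that never reports success would loop forever.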

This iterative loop is the secret sauce. It’s what separates a fragile, scripted automation—which breaks the moment a data field changes—from an autonomous agent that can actually handle ambiguity.

The Pillars of 2026-Grade Agents

Let’s be clear: "prompt engineering" is table stakes. If your agent is failing, it’s rarely because you didn't say "please" in your prompt. It’s because the agent is starving for context.

1. Context Engineering

If you want better performance, stop obsessing over the prompt and start obsessing over the data architecture. This is "Context Engineering." It’s about building high-fidelity retrieval systems that give your agent a firm grasp of your company’s internal logic, past audit logs, and API constraints. When an agent can draw on a structured, clean knowledge graph, its reasoning improves markedly: it guesses less and grounds more of its decisions in your actual data.
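A toy version of the retrieval step might look like this. It is a sketch under loud assumptions: the three `KNOWLEDGE_BASE` entries are invented, and simple keyword overlap stands in for the embedding search or knowledge-graph queries a real system would use. The point is the shape: retrieve the few facts relevant to the task and put them in front of the model, labeled with their source.

```python
import re

# Invented example entries; a real system would pull these from docs, audit logs, and API specs.
KNOWLEDGE_BASE = [
    {"id": "policy-7", "text": "Refunds above 500 USD require approval from a finance manager."},
    {"id": "api-orders", "text": "The orders API rejects requests without an X-Request-Id header."},
    {"id": "audit-q1", "text": "The Q1 audit flagged duplicate refunds issued to the same customer."},
]


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[dict], k: int = 2) -> list[dict]:
    """Rank documents by how many query words they share (a stand-in for vector search)."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(d["text"])), d) for d in docs]
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in relevant[:k]]


def build_context(query: str, docs: list[dict], k: int = 2) -> str:
    # Tag each snippet with its source id so the agent (and the audit log) can cite it.
    snippets = [f"[{d['id']}] {d['text']}" for d in retrieve(query, docs, k)]
    return "Relevant company context:\n" + "\n".join(snippets) + f"\n\nTask: {query}"
```

Notice that naive keyword matching treats "refund" and "refunds" as different words. Closing gaps like that is exactly the kind of work context engineering is about.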

2. Deterministic Guardrails

LLMs are probabilistic by design. That’s great for writing poetry, but it’s a nightmare for accounting. In high-stakes fields like law or finance, you can’t afford "creative" math. Companies are increasingly adopting agent platforms such as Salesforce Agentforce to wrap these probabilistic models in hard-coded, deterministic logic. Think of these as "no-go" zones. If an agent tries to authorize a payment that breaks a compliance rule, the system triggers a hard stop. It doesn't matter what the LLM thinks is right; the guardrail is the law.
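A deterministic guardrail can be as plain as a function that runs after the model proposes an action and before anything executes. The sketch below assumes a hypothetical payment flow: the vendor allowlist, the 10,000 limit, and the currency set are invented rules, and `send` stands in for whatever actually moves money. The model never gets a vote; if a rule fails, the call raises and nothing is sent.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Callable


@dataclass(frozen=True)
class PaymentRequest:
    vendor_id: str
    amount: Decimal  # Decimal, not float, so money comparisons are exact
    currency: str


# Hypothetical compliance rules, hard-coded and independent of the LLM.
APPROVED_VENDORS = frozenset({"V-100", "V-200"})
MAX_AUTO_AMOUNT = Decimal("10000.00")
ALLOWED_CURRENCIES = frozenset({"USD", "EUR"})


class GuardrailViolation(Exception):
    """Raised when a proposed action breaks a hard rule."""


def check_payment(req: PaymentRequest) -> None:
    problems = []
    if req.vendor_id not in APPROVED_VENDORS:
        problems.append(f"vendor {req.vendor_id} is not approved")
    if not (Decimal("0") < req.amount <= MAX_AUTO_AMOUNT):
        problems.append(f"amount {req.amount} is outside the auto-approval range")
    if req.currency not in ALLOWED_CURRENCIES:
        problems.append(f"currency {req.currency} is not allowed")
    if problems:
        raise GuardrailViolation("; ".join(problems))


def execute_payment(req: PaymentRequest, send: Callable[[PaymentRequest], str]) -> str:
    check_payment(req)  # hard stop: raises before send() is ever reached
    return send(req)
```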

3. Multi-Agent Orchestration

Expecting one agent to do everything is a recipe for failure. It’s like asking a CEO to also handle the accounting, the coding, and the janitorial work. Instead, current architectures favor a hierarchy. A "Manager" agent decomposes a high-level goal and farms it out to specialists: a "Researcher" for data, a "Coder" for execution, and a "Reviewer" for quality control.

It’s just a digital version of a standard office hierarchy. It allows you to optimize each agent for one specific, narrow competency.
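Stripped to its skeleton, the manager/specialist pattern is a routing problem. In this sketch every agent is a hypothetical plain function: in practice `manager` would ask an LLM to decompose the goal, and each specialist would be its own agent with its own prompt and tools. The reviewer acts as a gate, so no artifact moves forward until it passes.

```python
from typing import Callable


# Hypothetical specialists; each would be a separate, narrowly prompted agent in practice.
def researcher(task: str) -> str:
    return f"notes on: {task}"


def coder(task: str) -> str:
    return f"patch for: {task}"


def reviewer(artifact: str) -> bool:
    # Toy quality check: accept only artifacts in a format a specialist produces.
    return artifact.startswith(("notes on:", "patch for:"))


SPECIALISTS: dict[str, Callable[[str], str]] = {"research": researcher, "code": coder}


def manager(goal: str) -> list[tuple[str, str]]:
    """Hypothetical fixed plan; a real manager agent would generate this with an LLM."""
    return [("research", f"current behavior of {goal}"), ("code", f"fix for {goal}")]


def orchestrate(goal: str) -> list[tuple[str, str]]:
    results = []
    for role, subtask in manager(goal):
        artifact = SPECIALISTS[role](subtask)
        if not reviewer(artifact):
            raise RuntimeError(f"reviewer rejected {role} output: {artifact!r}")
        results.append((role, artifact))
    return results
```

Because each specialist sits behind the same `Callable[[str], str]` shape, you can tune or replace one without touching the others.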

Connecting to the Real World

The biggest headache in agent deployment has always been the "Bespoke Integration Tax." Developers spent years writing custom glue code to connect every new tool to their workflows.

Thankfully, the industry is rallying around the Model Context Protocol (MCP). It’s an open standard that gives agents a universal way to talk to any repository or tool that implements it. The Agentic AI Foundation, which now stewards MCP, is essentially building the "USB port" for AI. Once everyone is speaking the same language, developers can stop wasting time on proprietary plumbing and focus on building actual value.
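Under the hood, MCP messages are JSON-RPC 2.0, and tool use goes through methods such as `tools/list` and `tools/call`. The sketch below only builds those message shapes with Python's standard library, assuming a hypothetical server that exposes a `lookup_customer` tool. A real client would first complete MCP's `initialize` handshake and send these over a transport such as stdio, both omitted here. In practice you would likely use an official MCP SDK rather than hand-rolling messages.

```python
import json
from itertools import count
from typing import Optional

_request_ids = count(1)  # request ids must not be reused within a session


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invoke one of them. "lookup_customer" and its arguments are hypothetical.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "lookup_customer", "arguments": {"email": "ada@example.com"}},
)
```

Because every compliant server speaks this same vocabulary, the client-side code does not change when you point it at a different tool.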

The Risks: What Could Go Wrong?

Autonomy comes with a price.

"Tool Poisoning" is a real threat. If an attacker hides malicious instructions in a tool’s description or in the data an agent reads, they might trick it into executing unauthorized API calls or leaking sensitive data. Because these agents often have high-level permissions, you need to be militant about the "principle of least privilege." Don't give an agent access to everything if it only needs to touch one database.
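One way to enforce least privilege is to route every tool call through a gateway that checks a per-agent allowlist before dispatching. In this sketch the agent names, tool names, and `GRANTS` table are hypothetical. Because the check lives in ordinary code outside the model, a poisoned instruction can change what the agent asks for but not what it is allowed to do.

```python
from typing import Callable


class PermissionDenied(Exception):
    pass


# Hypothetical grants: each agent may call only the tools its job requires.
GRANTS: dict[str, frozenset[str]] = {
    "billing-agent": frozenset({"read_invoices"}),
    "support-agent": frozenset({"read_tickets", "reply_to_ticket"}),
}


def make_tool_gateway(tools: dict[str, Callable[..., object]]) -> Callable[..., object]:
    def call(agent: str, tool: str, **kwargs: object) -> object:
        allowed = GRANTS.get(agent, frozenset())  # unknown agents get nothing
        if tool not in allowed:
            raise PermissionDenied(f"{agent} is not allowed to call {tool}")
        return tools[tool](**kwargs)
    return call
```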

Then there’s the latency trap. If an agent spends too long "thinking," it might time out or lock up critical system resources. You have to balance the depth of reasoning against the speed of the business.
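A simple defense is to give every run an explicit budget of steps and wall-clock time. The sketch assumes a hypothetical `step` callable that performs one reasoning or tool step and returns an answer string once it has one. The clock is injectable so the budget can be tested without actually waiting.

```python
import time
from typing import Callable, Optional


class BudgetExceeded(Exception):
    pass


def run_with_budget(
    step: Callable[[int], Optional[str]],
    max_steps: int = 8,
    max_seconds: float = 30.0,
    clock: Callable[[], float] = time.monotonic,
) -> str:
    deadline = clock() + max_seconds
    for i in range(max_steps):
        if clock() > deadline:
            raise BudgetExceeded(f"time budget spent after {i} steps")
        answer = step(i)  # one think/act/observe cycle
        if answer is not None:
            return answer
    raise BudgetExceeded(f"no answer within {max_steps} steps")
```

This checks the budget between steps, so it cannot interrupt a single call that hangs. For that, each tool or model call also needs its own timeout (for example, `asyncio.wait_for` in async code).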

Finally, every action needs a paper trail. When an agent slips up, you can’t just look at a chat log. You need the full audit history: the reasoning process, the tools invoked, and the specific data that led to that bad decision.
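A minimal audit trail can be one JSON object per agent step, appended to a log that is never rewritten. The field names below are an assumption, not a standard. The point is that the reasoning, tool, inputs, and output are captured together, so a bad decision can be traced back to the data that produced it.

```python
import json
from datetime import datetime, timezone


def audit_record(run_id: str, step: int, reasoning: str,
                 tool: str, arguments: dict, output: object) -> str:
    """Serialize one agent step as a single JSON line (field names are illustrative)."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "run_id": run_id,
        "step": step,
        "reasoning": reasoning,
        "tool": tool,
        "arguments": arguments,
        "output": output,
    }, sort_keys=True, default=str)  # default=str keeps non-JSON outputs loggable


def append_audit(path: str, record: str) -> None:
    # Opens in append mode, so earlier lines are never rewritten by this function.
    with open(path, "a", encoding="utf-8") as log:
        log.write(record + "\n")
```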

How to Get Ready

Preparation starts with the foundation of your data. If your internal APIs are a mess and your documentation is a ghost town, your agents will be effectively blind. Spend the next few months cleaning your internal house. Standardize those endpoints.

When you start building your stack, prioritize modularity. Don't lock yourself into one model or one framework. Use tools that let you swap out the backend without tearing down your entire logic layer.
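In Python, one lightweight way to keep the model swappable is to have business logic depend on a small interface rather than on any vendor SDK. The `ModelBackend` protocol and both backends below are hypothetical; a real adapter would wrap your provider's client inside `complete`.

```python
from typing import Protocol


class ModelBackend(Protocol):
    def complete(self, prompt: str) -> str: ...


# Two hypothetical backends. Real ones would wrap a provider SDK inside complete().
class EchoBackend:
    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class ShoutBackend:
    def complete(self, prompt: str) -> str:
        return prompt.upper()


def summarize_ticket(ticket: str, model: ModelBackend) -> str:
    # The logic layer sees only the interface, so backends can be swapped freely.
    return model.complete(f"Summarize: {ticket}")
```

Swapping providers then means writing one new adapter class; `summarize_ticket` and everything built on it stay untouched.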

If you want to see an immediate win, start small. Find a high-frequency, low-complexity task where AI productivity tools can take the load off your team. Let the machines handle the rote execution so your people can finally focus on the stuff that actually requires a human touch.

Frequently Asked Questions

What is the main difference between a chatbot and an autonomous agent?

Chatbots are designed to provide information through a conversational interface, whereas autonomous agents are designed to execute actions and solve complex, multi-step tasks by interacting directly with software tools and APIs.

Why are "deterministic guardrails" necessary for AI agents?

LLMs are probabilistic and can occasionally produce inaccurate content. Deterministic guardrails provide a hard layer of logic—independent of the AI—to ensure that agents strictly follow compliance rules and safety protocols during mission-critical operations.

Can autonomous agents work together?

Yes. Through multi-agent orchestration, specialized agents with distinct competencies, such as a "researcher" and a "reviewer," can collaborate on a single project, which can improve accuracy and support more sophisticated problem-solving.

What is "Context Engineering" and why does it matter?

Context engineering is the practice of structuring data and retrieval systems so an agent has the precise information needed to make accurate decisions. It matters because it shifts the focus from "writing better prompts" to building a robust data architecture that supports reliable agent performance.

What is the Model Context Protocol and how does it simplify agent integration?

The Model Context Protocol (MCP) is an open standard that provides a universal way for agents to connect with external tools and data sources. It reduces the need for bespoke, one-off integrations, allowing developers to build agents that are compatible with a wide range of enterprise software.