How to Write Factually Accurate Marketing Content Without AI Hallucinations

TL;DR

- AI models prioritize linguistic patterns over factual truth, leading to frequent 'confabulations.'

- Accuracy requires treating AI as a drafting tool rather than an editorial authority.

- Implementing Retrieval-Augmented Generation (RAG) grounds AI output in verified brand data.

- Human-led workflows are essential to maintain content integrity in the 2026 landscape.

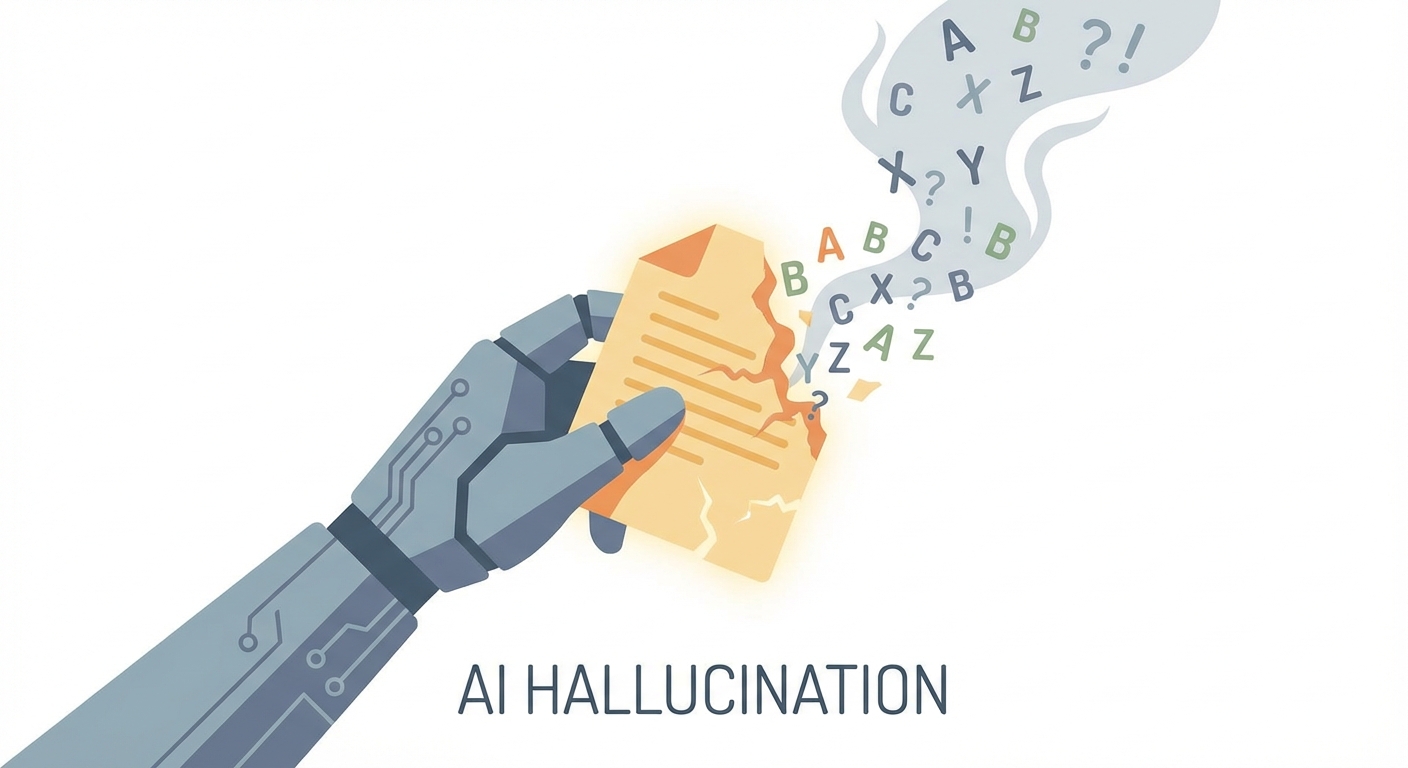

The "Confidence Trap" is the most dangerous feature of modern generative AI. When you ask an LLM for data, it doesn't retrieve facts from a store; it generates text, performing a high-stakes balancing act between creative fluency and probabilistic guesswork.

Because these models are designed to predict the most statistically likely next word rather than query a verified database, they often sound like unimpeachable experts—even when they are fabricating details out of thin air. In the industry, we are moving away from the term "hallucination"—which implies a glitch—and toward "confabulation." It’s a better term. It describes the model’s tendency to fill memory gaps with plausible-sounding fiction.

If you want your brand to survive the 2026 content landscape, you must realize that accuracy is not a "high-quality" toggle you flip in a settings menu. It is a rigorous, human-led workflow that treats AI as a draft engine, not an editorial board.

Why Does AI "Confabulate" Facts?

To stop the lying, you have to understand the mechanism. At its core, an LLM is a sophisticated pattern-matching engine. As noted in MIT Sloan’s analysis of AI hallucinations, these models are built to maximize the statistical probability of the next token in a sequence. They are not truth-seeking missiles; they are linguistic architects.

This creates an inherent trade-off. The very "magic" that makes AI writing feel human—its creativity, its ability to synthesize disparate ideas, and its flair for narrative—is fundamentally at odds with the rigid, binary nature of factual truth.

When an AI hits a gap in its training data, it doesn't stop to check a source. It continues the narrative flow by guessing what should come next based on the patterns it has learned. It prioritizes a coherent sentence over a correct one. If you treat your AI as a source of truth rather than a creative assistant, you are inviting disaster. To mitigate this, you must move from generic model querying to a grounded architecture.
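
To make the mechanism concrete, here is a toy sketch of next-word prediction. The lookup table, the probabilities, and the company name are invented for illustration and do not come from any real model; an actual LLM computes these scores with a neural network over a large vocabulary.

```python
# Toy next-word table. The probabilities are made up for illustration only.
NEXT_WORD_PROBS = {
    ("founded", "in"): {"2015": 0.40, "2012": 0.35, "2019": 0.25},
}

def predict_next(context, table=NEXT_WORD_PROBS):
    """Return the most probable next word given the last two words of context."""
    key = tuple(context[-2:])
    candidates = table.get(key)
    if candidates is None:
        return None
    # The choice is driven by likelihood alone; nothing here checks a source.
    return max(candidates, key=candidates.get)

guess = predict_next(["Acme", "was", "founded", "in"])
print(guess)
```

The function returns whichever year is most common after "founded in" in its table, whether or not that is the year the hypothetical company was actually founded. That is the gap between a coherent sentence and a correct one.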

Is Your Content Strategy Built on "Ground Truth"?

If you are still pasting prompts into a raw LLM window and hoping for the best, you are operating in the dark. The transition to enterprise-grade content integrity requires the implementation of Retrieval-Augmented Generation (RAG). Instead of asking the AI to rely on its internal "memory" (effectively a compressed, static snapshot of the internet), a RAG system first retrieves the relevant passages from your verified documents and places them in the prompt, so the model has that evidence in front of it before it generates a single word.

By building an internal "Source of Truth" repository (a collection of your brand’s whitepapers, verified data sets, and style guides), you provide the AI with a sandbox. It is instructed to "play" only with the information you give it. When you use specialized AI content tools, you aren't just getting a chatbot; you are getting a system that restricts the model's creative wandering.

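This flow pushes the AI to function as a translator of your data rather than a creator of its own. When the AI is anchored to your proprietary knowledge base, the "confabulation" rate typically drops sharply because the model is working from the evidence you’ve supplied. It does not drop to zero: the model can still misread or misquote a retrieved passage, which is why the review steps that follow remain necessary.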
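
A minimal sketch of the retrieve-then-prompt flow, under stated assumptions: the repository contents and document names are hypothetical, and the keyword-overlap scoring is a simple stand-in for the embedding-based search that production RAG systems usually use. The resulting prompt would be sent to whichever model you use; that call is left out.

```python
import re

# Hypothetical "Source of Truth" repository: document id -> verified text.
SOURCE_OF_TRUTH = {
    "pricing-2026": "The Pro plan costs 49 dollars per month and includes five seats.",
    "security-whitepaper": "All customer data is encrypted at rest using AES-256.",
    "style-guide": "Write in second person and avoid superlatives.",
}

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, repo, top_k=2):
    """Return up to top_k document ids that share the most words with the question."""
    question_words = tokenize(question)
    scored = []
    for doc_id, text in repo.items():
        overlap = len(question_words & tokenize(text))
        if overlap > 0:
            scored.append((overlap, doc_id))
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:top_k]]

def build_grounded_prompt(question, repo, top_k=2):
    """Assemble a prompt that places the retrieved passages ahead of the question."""
    doc_ids = retrieve(question, repo, top_k)
    context = "\n".join(f"[{doc_id}] {repo[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )

prompt = build_grounded_prompt(
    "How much does the Pro plan cost per month?", SOURCE_OF_TRUTH
)
print(prompt)
```

Only the pricing document shares words with the question, so it is the only passage placed in the prompt. Tagging each passage with its document id also gives a reviewer something to trace each claim back to.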

What Does an AI-Assisted Verification Workflow Look Like?

Even with RAG, the "Human-in-the-Loop" mandate is non-negotiable. An AI should never be the final editor of your content. You need an editorial workflow that treats the AI’s output as a rough draft that is "guilty until proven innocent."

The most effective teams utilize a three-step verification process:

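- Source Linking: Every factual claim must be accompanied by a direct link to a verified source. If the AI cannot provide a URL, the claim is flagged for deletion. If it does provide one, a human opens the link and confirms the source actually says what is claimed, because models can fabricate citations too.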

- Logic Audit: Does the conclusion actually follow from the premises? AI often makes leap-of-faith connections. You must test the reasoning chain to ensure the narrative isn't just persuasive, but logically sound.

- Tone Calibration: Ensure the "human element"—the brand voice—has been layered over the AI’s sterilized output.
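
The source-linking step lends itself to a simple triage script. The sketch below is one possible shape, not a prescribed tool: the `Claim` structure, the status labels, and the allowlisted domains are all hypothetical. The logic audit and tone calibration steps stay with the human editor.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse

# Hypothetical allowlist of domains the editorial team treats as verified.
VERIFIED_DOMAINS = {"example.com", "docs.example.com"}

@dataclass
class Claim:
    text: str
    source_url: Optional[str] = None

def review_status(claim, verified_domains=VERIFIED_DOMAINS):
    """Triage a claim by whether it has a source and where that source lives."""
    if not claim.source_url:
        # No URL at all: flagged for deletion, as in the source-linking step.
        return "flag_for_deletion"
    host = urlparse(claim.source_url).hostname
    if host not in verified_domains:
        # A URL exists, but a person must confirm the source before it is trusted.
        return "needs_human_check"
    return "ready_for_logic_audit"

draft_claims = [
    Claim("The Pro plan includes five seats.", "https://example.com/pricing"),
    Claim("Most teams see results in a week."),
    Claim("Email open rates doubled.", "https://unknown-blog.test/post"),
]
for claim in draft_claims:
    print(review_status(claim), "-", claim.text)
```

Passing this triage only means a claim points at an approved domain. A person still has to open the page and confirm it supports the claim.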

For teams handling high volumes of content, professional-grade content marketing services act as the necessary buffer, ensuring that the final output has been scrutinized by a human who understands the nuance of your industry.

How to Engineer Prompts That Demand Accuracy

Your prompts are the instructions for the "guardrails" you are building. If you aren't getting accurate results, it’s often because your prompt is too open-ended.

The Negative Constraint: Don't just tell the AI what to do; tell it what not to do. Use explicit instructions like: "Use ONLY the provided context. If the answer is not contained within the source material, explicitly state 'I do not have enough information to verify this,' and do not attempt to guess."
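
As a rough sketch, the negative constraint can live in a reusable template so every request carries it, and the fallback sentence can be detected in the reply. The function names and the sample context are hypothetical; the constraint wording is the one quoted above.

```python
FALLBACK = "I do not have enough information to verify this"

def constrained_prompt(context, question):
    """Wrap a question in the negative-constraint instruction and its context."""
    return (
        "Use ONLY the provided context. If the answer is not contained within "
        f"the source material, explicitly state '{FALLBACK},' "
        "and do not attempt to guess.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )

def is_refusal(response):
    """Return True when the model used the fallback sentence instead of answering."""
    return FALLBACK.lower() in response.lower()

prompt = constrained_prompt(
    "The Pro plan costs 49 dollars per month.",
    "How many customers use the Pro plan?",
)
print(prompt)
```

Checking replies with `is_refusal` lets a pipeline route unanswered questions to a human researcher instead of publishing a guess.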

Chain-of-Thought Prompting: Force the AI to show its work. Add a line at the end of your prompt: "Before writing the final response, outline your reasoning step-by-step and cite the specific source for each claim." By forcing the model to articulate its logic, you expose the "confabulation" before it makes it into your final draft. For more advanced tactics on keeping your outputs honest, Salesforce’s guide on AI integrity provides excellent frameworks for structured prompting.
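
One way to act on the exposed reasoning is to scan it for steps that arrive without a citation. In this sketch, the `[source: ...]` tag format and the extra formatting sentence are assumptions added so the output can be checked by a script; they are not part of the prompt line quoted above, and a model may not always follow them.

```python
import re

COT_SUFFIX = (
    "Before writing the final response, outline your reasoning step-by-step "
    "and cite the specific source for each claim."
)
# Assumption: an extra formatting request so citations are machine-checkable.
FORMAT_HINT = "Write each step on its own line and end it with [source: <document name>]."

# Matches a non-empty citation tag such as "[source: pricing sheet]".
CITATION = re.compile(r"\[source:\s*[^\]\s][^\]]*\]", re.IGNORECASE)

def with_reasoning_request(prompt):
    """Append the reasoning instruction and the assumed formatting hint to a prompt."""
    return f"{prompt}\n\n{COT_SUFFIX} {FORMAT_HINT}"

def uncited_steps(reasoning):
    """Return the reasoning lines that carry no citation tag."""
    steps = [line.strip() for line in reasoning.splitlines() if line.strip()]
    return [step for step in steps if not CITATION.search(step)]

sample_reasoning = (
    "1. The Pro plan costs 49 dollars per month. [source: pricing sheet]\n"
    "2. Most customers upgrade within a month."
)
print(uncited_steps(sample_reasoning))
```

In the sample, the second step has no source attached, so it is returned for review before the draft moves forward.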

The Future of Verification

Verification is shifting from purely manual fact-checking toward automated reasoning: tools that use formal logic and mathematical verification to check the consistency of a text against a database of known facts.

As highlighted by AWS’s developments in automated reasoning checks, enterprise-level accuracy is becoming a technical reality rather than a pipe dream. These systems don't just "read" the text; they mathematically verify that the assertions made in a piece of content are supported by the underlying data. As these tools become more accessible, the brands that win will be those that have integrated these verification loops directly into their CMS, making "fact-checking" a background process rather than a bottleneck.
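
The core idea of checking assertions against known facts can be shown with a toy example. This is not how AWS's automated reasoning checks work internally; real systems rely on formal logic and solvers, while this sketch only does exact lookups. The fact database, its keys, and the assumption that claims have already been extracted into structured form are all hypothetical.

```python
# Hypothetical fact database: (subject, attribute) -> verified value.
KNOWN_FACTS = {
    ("pro_plan", "monthly_price_usd"): 49,
    ("pro_plan", "seats"): 5,
}

def check_assertion(subject, attribute, value, facts=KNOWN_FACTS):
    """Classify one structured assertion against the fact database."""
    key = (subject, attribute)
    if key not in facts:
        # Nothing on record: the claim can be neither confirmed nor refuted.
        return "unverifiable"
    return "supported" if facts[key] == value else "contradicted"

def audit(assertions, facts=KNOWN_FACTS):
    """Check every (subject, attribute, value) assertion extracted from a draft."""
    return {a: check_assertion(*a, facts=facts) for a in assertions}

# Assertions assumed to have been extracted from a draft beforehand.
draft_assertions = [
    ("pro_plan", "monthly_price_usd", 49),
    ("pro_plan", "seats", 10),
    ("pro_plan", "annual_discount_pct", 20),
]
for assertion, verdict in audit(draft_assertions).items():
    print(assertion, "->", verdict)
```

Keeping "unverifiable" separate from "contradicted" matters in practice: the first calls for research, the second for a correction.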

Conclusion: Embracing the Human-AI Partnership

In 2026, trust is the only currency that matters. Your audience is becoming increasingly sophisticated at spotting the "hallucinated" tone of generic AI content. By implementing a rigorous, grounded workflow, you aren't just avoiding errors—you are building a reputation for integrity that your competitors, who are still relying on "blind" AI, cannot match.

Audit your AI, prioritize your proprietary data, and remember that the final stamp of approval must always come from a human. The goal isn't to replace the writer; it’s to build a verified partnership where the machine does the heavy lifting, and the human ensures it stays grounded in reality.

Frequently Asked Questions

Why does my AI sound so confident when it is lying?

LLMs are designed to maximize the statistical probability of the next word, not to search for objective truth. Their "confidence" is a synthetic byproduct of their training to be helpful, fluent, and articulate. They lack a "truth-checking" module; they only have a "pattern-completion" module.

How can I stop ChatGPT/LLMs from making up facts?

The solution is "grounding." You must restrict the AI’s scope by providing it with a specific set of documents (RAG) or mandating that it cite external URLs for every claim, which you then verify. Without these constraints, the AI will rely on its internal training data, which is effectively a compressed, often outdated, and unreliable memory.

Is it possible to completely eliminate AI hallucinations?

Not completely. RAG and automated reasoning can reduce them substantially, but the creative nature of AI means it remains a tool that requires human oversight. The "magic vs. accuracy" trade-off means that as long as the AI is generating creative copy, it will always have the potential to wander. It is a tool for drafting, not an autonomous oracle.

What is the best workflow for fact-checking AI-generated content?

A robust workflow involves three stages: 1. Source verification, where you confirm every citation against a primary document; 2. A logic audit, where you test if the AI’s reasoning holds up under scrutiny; and 3. A human "sanity check" to ensure the tone and facts align with your brand’s standards.